Founder-Led MVP Testing in 2026: A Practical Setup

Founder-led testing is one of the highest-leverage things you can do before and right after launch — especially if you don’t have a big team. In 2026, tools make it easy to record sessions and ship fast, but most MVPs still fail because founders test the wrong thing or don’t turn feedback into decisions. This guide gives you a simple testing setup: recruiting, scripts, session structure, note-taking, and a weekly loop that turns insights into shipped improvements.

TL;DR: Run short, founder-led tests that focus on one outcome: can a new user reach value without help? Record everything, measure a few key events, and treat every session as a scope-cutting tool. In 2026, the winning pattern is not “more feedback”; it’s faster cycles from observed behavior to a shipped fix.

Why founder-led testing matters more in 2026

Shipping is faster. That’s exactly why testing is more important.

When building is cheap, the real cost becomes building the wrong thing for two extra weeks.

Founder-led testing gives you:

- direct exposure to real user confusion

- faster decisions about what to cut

- clearer messaging (because you hear what users actually say)

- confidence before you spend on paid acquisition

If you want a broader view of why MVPs still miss despite “fast development,” read “Why MVPs Still Fail in 2026.”

What you should test (and what you should stop testing)

Founder-led testing is not “ask people what they want.”

Test behavior.

Test these MVP basics

- Can the user explain what the product is within 10–20 seconds?

- Can they complete the core flow end-to-end?

- Do they reach a clear “aha” moment?

- Do they trust the product enough to take the next step?

Avoid these early traps

- feature wishlists (they inflate scope)

- long opinion debates about design style

- testing ten flows at once

If you need a reminder on keeping scope tight when feedback starts exploding, see “How to Prioritize Features When You’re Bootstrapping Your Startup.”

The 2026 testing setup: the minimum stack

You don’t need fancy research operations.

You need three things:

- A way to schedule and talk to users

- A way to record and store sessions

- A consistent way to capture outcomes and decisions

Everything else is optional.

Recruiting: who to test with (and how many)

In early MVP testing, you’re not looking for statistical truth.

You’re looking for repeated friction.

A practical target:

- 5 sessions per round for one audience

- repeat in weekly cycles

Recruit from:

- people who already expressed the problem (warm leads)

- communities where your ICP hangs out

- outbound messages to a tight profile

Founder rule: don’t test with friends who want to be nice.

The session structure that works (about 30 minutes)

Keep it short and structured.

1) Context (3–5 minutes)

Ask:

- “What problem are you trying to solve today?”

- “How do you solve it now?”

This frames what “value” should look like.

2) The task (15–20 minutes)

Give one clear task:

- “Try to get to the result you would want from this product.”

Then shut up.

Observe where they:

- hesitate

- misread buttons

- ask “what does this mean?”

- abandon a step

3) Debrief (5–8 minutes)

Ask:

- “What did you think would happen?”

- “What felt unclear?”

- “What would make this a no-brainer for you?”

Avoid leading questions.

What to write down (and how to avoid messy notes)

Don’t capture everything. Capture decision material.

In each session, record:

- time to first value moment

- where they got stuck (exact screen/step)

- what they assumed (their mental model)

- what fixed it (if they figured it out)

Then label each issue as one of:

- messaging problem (they didn’t understand the promise)

- UX problem (they couldn’t find the next step)

- product problem (the flow doesn’t match reality)

- trust problem (they didn’t feel safe to proceed)

This categorization makes prioritization easier.
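One way to keep notes consistent is to give every session the same shape. The sketch below is a minimal illustration, not a prescribed tool: the class names, field names, and sample data are all hypothetical, and a spreadsheet with the same columns would work just as well.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class IssueType(Enum):
    MESSAGING = "messaging"  # they didn't understand the promise
    UX = "ux"                # they couldn't find the next step
    PRODUCT = "product"      # the flow doesn't match reality
    TRUST = "trust"          # they didn't feel safe to proceed


@dataclass
class Issue:
    step: str                         # exact screen or step where they got stuck
    assumption: str                   # what they assumed (their mental model)
    issue_type: IssueType
    resolution: Optional[str] = None  # what fixed it, if they figured it out


@dataclass
class SessionNote:
    participant: str
    seconds_to_first_value: Optional[int]  # None if they never reached value
    issues: list[Issue] = field(default_factory=list)


def count_by_type(notes: list[SessionNote]) -> Counter:
    """Count issues per category across all sessions in a round."""
    return Counter(issue.issue_type for note in notes for issue in note.issues)


# Hypothetical notes from two sessions (illustrative data, not real results).
notes = [
    SessionNote("P1", 140, [
        Issue("import step", "expected drag-and-drop upload", IssueType.UX),
    ]),
    SessionNote("P2", None, [
        Issue("landing page", "thought it was a reporting tool", IssueType.MESSAGING),
        Issue("import step", "looked for an upload button", IssueType.UX,
              "found it under the menu"),
    ]),
]

for issue_type, count in count_by_type(notes).most_common():
    print(issue_type.value, count)
```

Keeping the category as a fixed set of four values, rather than free text, is what makes the per-round tally possible.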

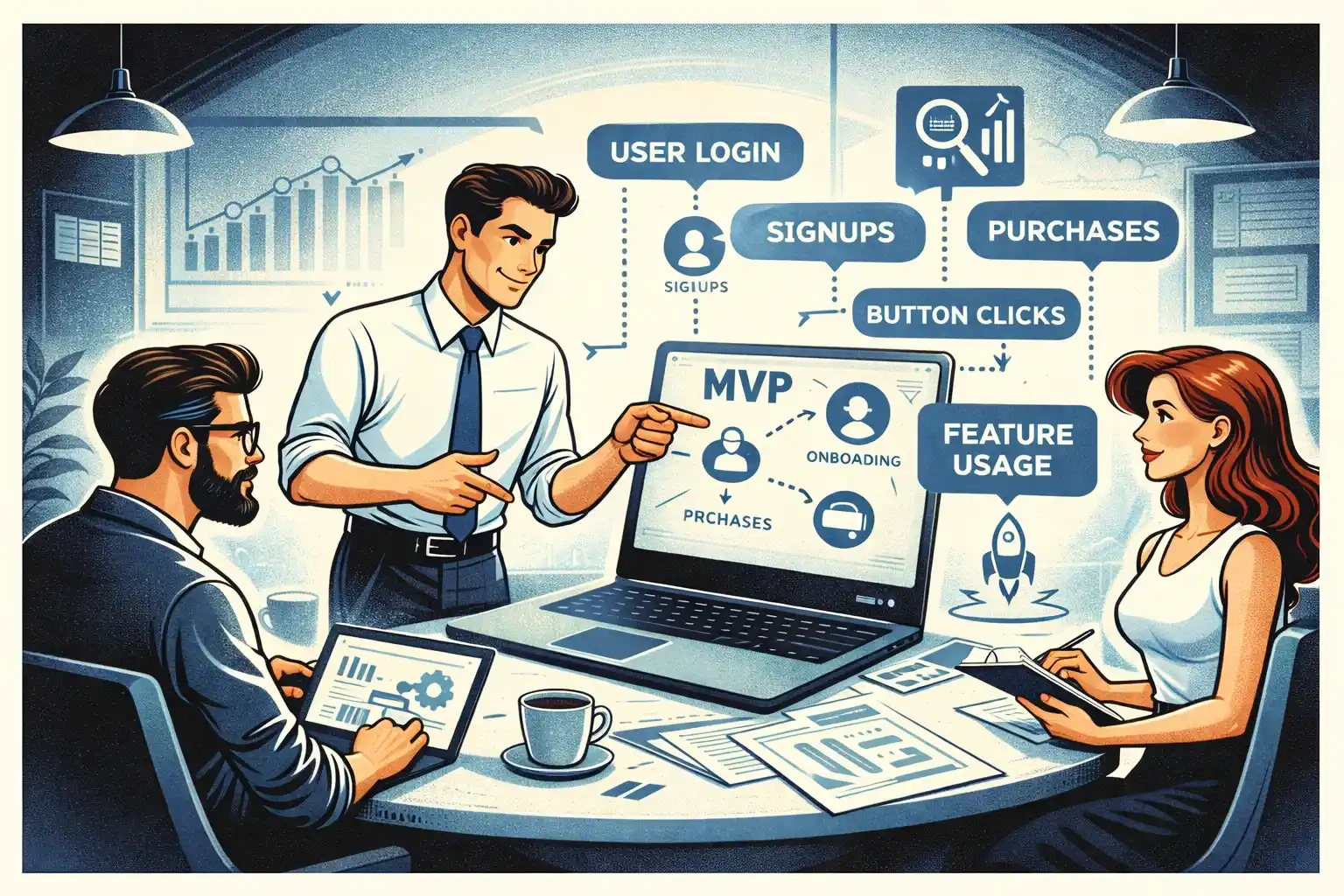

Analytics: pair sessions with a few events

Testing without analytics is slow.

Analytics without testing is misleading.

The MVP-friendly mix:

- founder-led sessions to understand “why”

- core events to confirm “how often”

If you need the event plan that fits early-stage products, read “MVP Analytics in 2026: Events to Track Early.”
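To make the “how often” side concrete, here is a rough sketch of one core metric: the share of users who started and then reached the value moment. The event names `signed_up` and `core_flow_completed`, the function name, and the sample events are assumptions for illustration; substitute whatever your analytics tool actually records.

```python
def activation_rate(events, start_event="signed_up",
                    value_event="core_flow_completed"):
    """Share of users who fired start_event and also fired value_event.

    `events` is an iterable of (user_id, event_name) pairs.
    Returns 0.0 when nobody has started, to avoid dividing by zero.
    """
    events = list(events)
    started = {user for user, name in events if name == start_event}
    reached_value = {user for user, name in events if name == value_event}
    activated = started & reached_value
    return len(activated) / len(started) if started else 0.0


# Hypothetical event log (illustrative data, not real results).
events = [
    ("u1", "signed_up"), ("u1", "core_flow_completed"),
    ("u2", "signed_up"),
    ("u3", "signed_up"), ("u3", "core_flow_completed"),
    ("u4", "signed_up"),
]

print(f"Activation rate: {activation_rate(events):.0%}")
```

A number like this tells you how widespread a drop-off is; the sessions tell you why it happens.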

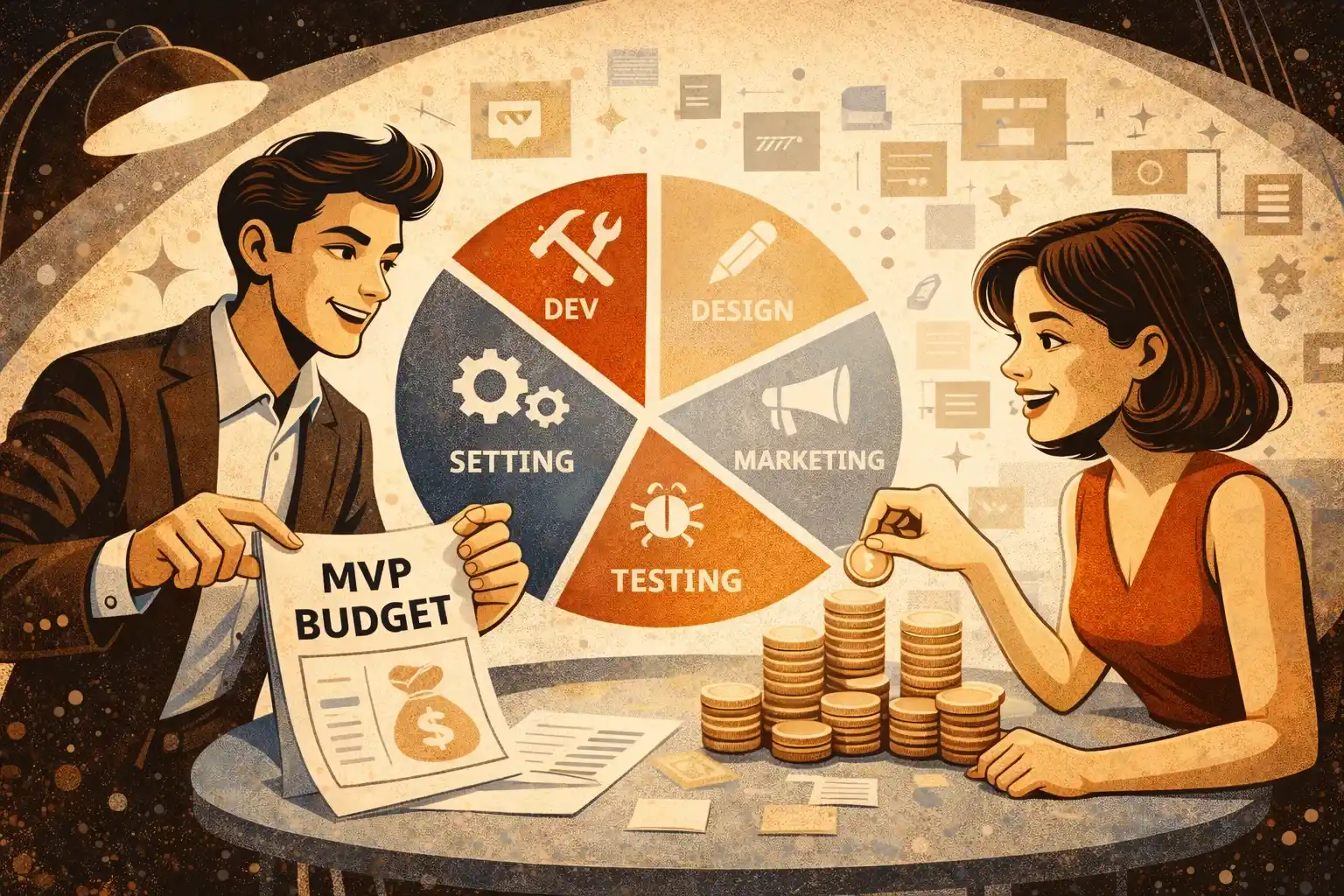

The weekly loop: test - decide - ship

Founder-led testing only works if it turns into shipped changes.

A simple weekly rhythm:

- Monday–Tuesday: run 3–5 sessions

- Wednesday: pick the top 1–3 fixes (not 10)

- Thursday: ship fixes

- Friday: rerun 1–2 sessions to confirm improvement

This is how you compound learning.
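The Wednesday decision can be sketched as a simple ranking: count how many distinct sessions hit each stuck point, keep only the ones that repeat, and cap the list at three. The function name, the `min_sessions` threshold of two, and the sample observations are assumptions for illustration, and the ranking is an input to your judgment rather than a substitute for it.

```python
from collections import defaultdict


def top_fixes(observations, max_fixes=3, min_sessions=2):
    """Rank stuck points by how many distinct sessions hit them.

    `observations` is an iterable of (session_id, step) pairs.
    Steps seen in fewer than `min_sessions` sessions are dropped,
    since a single occurrence is not yet repeated friction.
    """
    sessions_by_step = defaultdict(set)
    for session_id, step in observations:
        sessions_by_step[step].add(session_id)

    repeated = [
        (step, len(sessions))
        for step, sessions in sessions_by_step.items()
        if len(sessions) >= min_sessions
    ]
    # Most sessions first; ties broken alphabetically for a stable order.
    repeated.sort(key=lambda item: (-item[1], item[0]))
    return repeated[:max_fixes]


# Hypothetical observations from a five-session round (illustrative data).
observations = [
    ("s1", "pricing page"), ("s1", "import step"),
    ("s2", "import step"), ("s2", "settings"),
    ("s3", "import step"), ("s3", "signup form"),
    ("s4", "signup form"),
    ("s5", "import step"), ("s5", "pricing page"),
]

for step, session_count in top_fixes(observations):
    print(f"{step}: seen in {session_count} sessions")
```

Counting distinct sessions rather than raw mentions keeps one talkative participant from dominating the list.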

How AI helps in testing (without replacing judgment)

AI can accelerate testing by:

- summarizing transcripts

- clustering repeated issues

- drafting change proposals (“rewrite this onboarding step”)

But AI can’t decide tradeoffs.

You still need to choose:

- what to cut

- what to simplify

- what to delay

Treat AI as a compression tool, not a product manager.

What “good testing” produces

After a testing round, you should have:

- a clearer MVP scope (usually smaller)

- a short list of the biggest blockers

- a few high-impact UX and copy changes

- a stronger activation path

- a cleaner next set of questions

If your testing produced a 30-item backlog, you ran it like a brainstorming session, not a decision tool.

If you have no developer: still test

You can run founder-led testing before code exists:

- prototype tests (clickable Figma)

- concierge tests (manual delivery of the outcome)

- landing page tests for messaging

Thinking about building a usable MVP in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps — including AI-powered workflows — with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

How many users should I test with for an MVP?

Start with 5 sessions per round. You’re looking for repeated friction patterns, not statistically perfect results.

What’s the best length for a founder-led testing session?

30 minutes is enough: a short context check, one task flow, and a quick debrief.

Should I test a prototype or a live product?

Both work. Prototype testing is great for flow and messaging. Live product testing is best for real activation, trust, and edge cases.

What if users ask for lots of extra features?

Treat feature requests as signals of unmet outcomes, not as a to-do list. Translate them into the underlying problem, then decide what supports the core MVP outcome.

What’s the fastest way to improve the MVP after testing?

Fix the biggest activation blockers first: confusing value promise, unclear next step, missing trust signals, or steps that don’t match real behavior.

Do I need analytics if I’m running live user sessions?

Yes. Sessions explain “why,” events show “how often.” Together they help you prioritize changes confidently.

How do I keep testing from turning into endless research?

Run it in weekly cycles with a shipping rule: every round must produce 1–3 shipped improvements and a clear next question.
