How Accurate Is ChatGPT in 2026? Long Context, Settings, and Product Risk

ChatGPT is now part of product design, support, research, internal workflows, and user-facing features, which makes one question more important than ever: how accurate is it when real startup decisions depend on the output? The answer is not simply “good” or “bad.” Accuracy changes depending on the task, the context you provide, the settings and guardrails around the workflow, and how much risk sits behind the result. This article breaks that down for founders who want to use AI responsibly without slowing down product progress.

TL;DR: ChatGPT can be very useful in 2026, but its accuracy is highly context-dependent. It performs much better on structured drafting, summarization, pattern-based help, and constrained workflows than on ambiguous decisions, high-stakes judgment, or tasks where hidden context matters.

Why “accuracy” is the wrong starting question

Founders often ask whether ChatGPT is accurate as if accuracy were a single number. That is not how the problem works.

A model can be very good at one task and unreliable at another. It can perform well when the prompt is tight and the workflow is constrained, then become risky when the task depends on unstated assumptions, missing context, or real-world judgment.

That is why teams get misled. They test ChatGPT on a few impressive outputs and assume it is ready for broader use. Then they move it into a product flow, a support workflow, or a content pipeline where the failure conditions are completely different.

If you are thinking about AI inside startup workflows more broadly, AI at Work in 2026: Where It Helps and Where It Backfires is the natural starting point.

Where ChatGPT is usually accurate enough

In 2026, ChatGPT is usually strongest when the job is structured and the cost of an occasional error is low.

That includes things like draft generation, rewriting, summarizing large amounts of text, extracting patterns from structured inputs, generating first-pass UX copy, organizing internal notes, or helping teams think through options faster. In these situations, the model often saves time even when the output still needs review.

It can also work well inside product workflows when the task is narrow. For example, helping classify support messages, suggesting labels, generating first drafts from templates, or assisting with repetitive internal operations can be reasonable use cases.

The key is that these are controlled environments. The output is either reviewed by a human or limited enough that a mistake does not quietly become a serious product risk.
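As a rough illustration of a "controlled environment," here is a minimal sketch of a support-message classifier that only accepts labels from a fixed list and sends anything else to a person. The label set, the function name, and the `model` callable are all hypothetical stand-ins, not a real API.

```python
from typing import Callable

# Hypothetical label set; a real product would define its own.
ALLOWED_LABELS = {"billing", "bug_report", "feature_request", "other"}


def classify_support_message(message: str, model: Callable[[str], str]) -> dict:
    """Ask the model for a label and accept it only if it is on the allow-list.

    `model` is any function that takes the message text and returns a string.
    It stands in for whatever model call your product actually makes.
    """
    raw = model(message).strip().lower()
    if raw in ALLOWED_LABELS:
        return {"label": raw, "needs_review": False}
    # Unexpected output is never trusted silently; a human looks at it.
    return {"label": None, "needs_review": True}
```

The point of the design is that the model can only choose among options the team has already approved, so a strange answer becomes a review task rather than a product bug.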

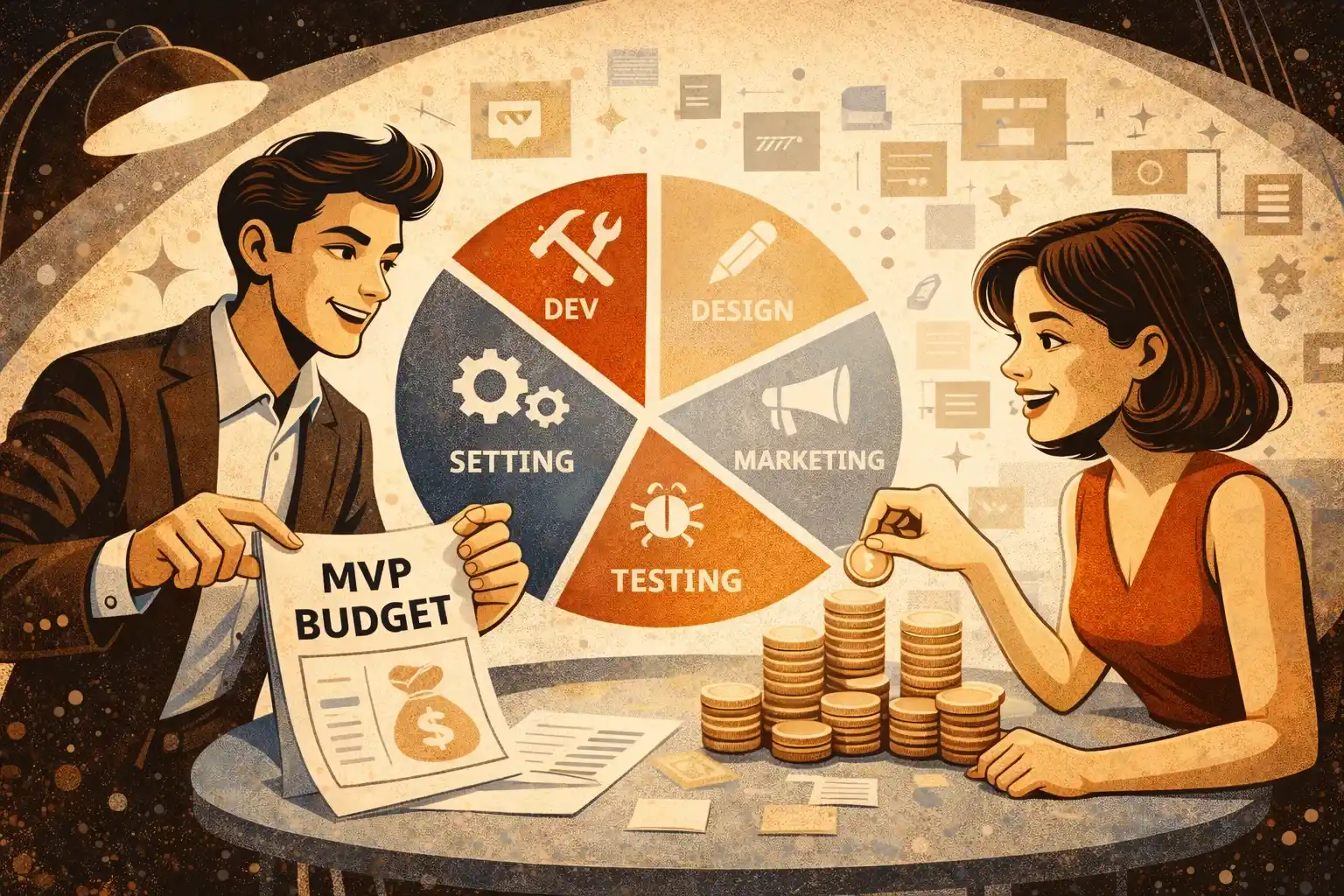

That logic overlaps with AI-Powered MVP Development: Save Time and Budget Without Cutting Quality.

Where accuracy starts to break down

Accuracy gets weaker when the task requires strong judgment, hidden domain context, real-time facts, or reliability under ambiguity.

If the model is asked to infer too much, fill in gaps, or make decisions that depend on business nuance it cannot truly understand, the output may still sound convincing while being wrong in important ways.

This becomes especially dangerous in product-facing flows. If ChatGPT is generating advice, recommendations, summaries, or decisions that users will rely on, you are no longer evaluating “nice draft quality.” You are evaluating trust.

The same applies to internal founder decisions. If the team starts using ChatGPT as a shortcut for product strategy, legal interpretation, pricing judgment, or critical prioritization, the risk rises fast.

This is closely related to AI Product Mistakes Startups Make in 2026.

Long context helps — but it does not solve the core problem

Long context windows have improved a lot, and they do matter. If you provide more of the relevant conversation, the spec, the policy, the user history, or the workflow steps, the model can often produce more coherent and more relevant outputs.

But long context does not automatically create understanding. More text can improve continuity, yet it can also introduce noise, conflicting signals, or hidden assumptions that the model handles imperfectly.

Founders sometimes believe that if they just “feed more context,” the model will become safe enough for important workflows. That is not a reliable assumption.

Long context is helpful when it makes the task clearer. It is not a replacement for structure, validation, or product thinking.
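One way to keep context focused is to pass only the passages that relate to the question instead of everything available. The sketch below uses a deliberately naive keyword-overlap filter to show the idea; the function names are hypothetical, and a real system would more likely use a proper retrieval layer.

```python
import re


def _words(text: str) -> set:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(question: str, chunks: list, max_chunks: int = 3) -> list:
    """Return up to `max_chunks` chunks that share words with the question.

    Chunks with no overlap are dropped; the rest are ordered by overlap
    (highest first), with original order breaking ties.
    """
    q = _words(question)
    scored = [(len(q & _words(chunk)), i, chunk) for i, chunk in enumerate(chunks)]
    relevant = [item for item in scored if item[0] > 0]
    relevant.sort(key=lambda item: (-item[0], item[1]))
    return [chunk for _, _, chunk in relevant[:max_chunks]]
```

Even a crude filter like this makes the trade-off visible: less irrelevant text reaches the model, but anything the filter misses is context the model never sees, so the selection step needs testing too.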

If you are deciding how much complexity an early product should carry, How Much Architecture an MVP Needs in 2026 is also relevant here.

Settings matter less than workflow design

Many teams spend too much time thinking about settings and too little time thinking about workflow design.

Yes, sampling parameters such as temperature, prompt structure, retrieval layers, memory behavior, and model choice can affect output quality. But the bigger driver of real-world accuracy is usually the surrounding system: what input the model gets, what it is allowed to do, what it must not do, what happens when confidence is weak, and whether a human sees the result before it matters.

A badly designed workflow with a stronger model can still fail. A tightly designed workflow with limited responsibilities can often perform well enough, even if the model is not perfect.
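To make "the surrounding system" concrete, here is a minimal sketch of a routing step that decides what happens to a model output before anyone relies on it. The `confidence` field is an assumption: it stands for whatever signal your workflow has (a separate checker, a validation score), not a value the model is guaranteed to provide. The threshold and route names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    text: str
    confidence: float  # hypothetical score in [0, 1] from your own checks


def route(result: ModelResult, high_stakes: bool, threshold: float = 0.8) -> str:
    """Decide where a model output goes next.

    Returns one of: "fallback", "human_review", "auto".
    """
    if not result.text.strip():
        # Nothing usable came back: show the non-AI path instead.
        return "fallback"
    if high_stakes or result.confidence < threshold:
        # Weak confidence or serious consequences: a person sees it first.
        return "human_review"
    return "auto"
```

Note that nothing here depends on which model produced the text. That is the sense in which workflow design tends to matter more than settings.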

That is why AI + Human Workflows in 2026: The Best Hybrid Pattern belongs in this conversation.

Product risk is the part founders underestimate

Most founders do not underestimate the model. They underestimate the consequences of where they place it.

If ChatGPT helps a team brainstorm feature names, the downside of a bad answer is tiny. If it drafts support responses and a person checks them, the risk is manageable. But if it shapes compliance-sensitive outputs, onboarding decisions, financial guidance, health-related messaging, or important user recommendations, the bar is much higher.

In those workflows, “mostly correct” may not be good enough. The risk is not just bad output. It is broken trust, incorrect decisions, support burden, and product behavior that becomes hard to explain after the fact.

This is one reason AI Reliability in 2026: How to Avoid Bad Outputs should sit next to any product decision involving model-generated outputs.

A founder framework for thinking about accuracy

Instead of asking whether ChatGPT is accurate, ask four better questions.

First, what exactly is the task? Drafting, classifying, summarizing, recommending, deciding, or advising are not the same thing.

Second, what happens if it is wrong? A weak first draft is not the same as a wrong user-facing recommendation.

Third, how controlled is the input? If your model is working from consistently structured input, the outcome is usually more stable. If it is working from vague, messy, partial input, risk rises.

Fourth, who catches the failure? If no one sees the mistake before the user does, your tolerance for error should be much lower.
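The four questions can be turned into a rough checklist that a team fills in per use case. The sketch below is one possible encoding; the weights, thresholds, and wording of the recommendations are illustrative assumptions, not a validated scoring model.

```python
from dataclasses import dataclass

# Illustrative weights only: higher means the task leans more on judgment.
TASK_RISK = {
    "drafting": 0,
    "summarizing": 0,
    "classifying": 1,
    "recommending": 2,
    "advising": 3,
    "deciding": 3,
}


@dataclass
class UseCase:
    task: str                   # question 1: what exactly is the task?
    impact_if_wrong: int        # question 2: 0 = trivial ... 3 = severe
    structured_input: bool      # question 3: how controlled is the input?
    human_reviews_output: bool  # question 4: who catches the failure?


def risk_score(use_case: UseCase) -> int:
    """Add up the four answers into a single rough score."""
    score = TASK_RISK[use_case.task] + use_case.impact_if_wrong
    if not use_case.structured_input:
        score += 1
    if not use_case.human_reviews_output:
        score += 2
    return score


def recommendation(use_case: UseCase) -> str:
    """Map the score to a coarse recommendation."""
    score = risk_score(use_case)
    if score <= 2:
        return "ok to automate with spot checks"
    if score <= 5:
        return "keep a human in the loop"
    return "do not let the model lead"
```

For example, `recommendation(UseCase("drafting", 0, True, True))` returns `"ok to automate with spot checks"`, while an unreviewed advisory flow with severe impact and messy input lands in `"do not let the model lead"`. The numbers matter less than forcing the team to answer all four questions explicitly.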

This kind of thinking fits well with Tech Decisions for Founders in 2026.

What founders should put in place before trusting it more

The safest teams do not trust ChatGPT more because the output looks good. They trust it more only after building checks around it.

That usually means narrowing the task, reducing ambiguity, limiting what the model can influence, testing real failure cases, creating fallback behavior, and deciding where human review remains mandatory.
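Two of those checks, fallback behavior and testing real failure cases, can be sketched in a few lines. Everything named here is hypothetical: `model` stands in for your actual model call, `is_valid` for whatever validation fits your product, and the fallback message is a placeholder.

```python
from typing import Callable, List

# Placeholder text; a real product would choose its own wording.
FALLBACK_MESSAGE = "We could not generate an answer. A team member will follow up."


def safe_generate(
    prompt: str,
    model: Callable[[str], str],
    is_valid: Callable[[str], bool],
) -> str:
    """Return the model output only if the call succeeds and passes validation."""
    try:
        output = model(prompt)
    except Exception:
        # Any failure in the model call falls back instead of reaching the user.
        return FALLBACK_MESSAGE
    return output if is_valid(output) else FALLBACK_MESSAGE


def run_failure_cases(
    cases: List[str],
    model: Callable[[str], str],
    is_valid: Callable[[str], bool],
) -> List[str]:
    """Return the prompts that ended in the fallback path."""
    return [p for p in cases if safe_generate(p, model, is_valid) == FALLBACK_MESSAGE]
```

Running `run_failure_cases` over prompts collected from real incidents gives the team a concrete list of where the workflow still falls back, which is a better basis for expanding trust than a handful of impressive demos.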

It also means writing down rules around approved use, restricted use, and ownership. Once AI sits across multiple workflows, informal habits stop being enough.

That is exactly where AI Usage Policy in 2026: What Startups Should Put in Writing becomes relevant.

What this means for startup products in 2026

For most startups, ChatGPT is accurate enough to be useful in some parts of the business and some parts of the product. It is not accurate enough to be treated as a silent authority layer.

The strongest products are usually the ones that keep the model in a constrained role. They let it assist, draft, suggest, summarize, or accelerate — but they do not ask it to carry the full burden of judgment where product trust is at stake.

From a founder perspective, this is not pessimism. It is simply better system design.

Final thought

ChatGPT in 2026 is powerful, but power is not the same as reliability. Accuracy depends on the task, the context, the workflow design, and the cost of failure.

The smartest founders do not ask whether the model is good in general. They ask where it is good enough, where it needs guardrails, and where it should not be trusted to lead at all.

That is the difference between using AI as leverage and building product risk into your startup by accident.

Thinking about building an AI-powered MVP in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps — including AI-powered workflows — with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

Is ChatGPT accurate enough for startup work in 2026?

For some tasks, yes. It is often useful for drafting, summarizing, and structured assistance, but much less reliable for high-stakes judgment or ambiguous decisions.

Does long context make ChatGPT reliable?

It can improve relevance and continuity, but it does not remove the need for structure, validation, or review.

Are settings the main reason ChatGPT gets things wrong?

Usually no. Workflow design, missing context, and unclear task boundaries are often bigger reasons than settings alone.

Can ChatGPT be used in customer-facing product features?

Yes, but only with clear limits, failure handling, and a strong understanding of what happens when the output is wrong.

Where is product risk highest?

In flows tied to trust, money, health, legal meaning, compliance, or decisions users may rely on without question.

Should founders trust ChatGPT for strategy decisions?

It can help generate options or organize thinking, but it should not replace founder judgment or real user evidence.

What is the best way to improve reliability?

Constrain the task, structure the input, test failure cases, add human review where needed, and design fallback behavior early.
