AI Usage Policy in 2026: What Startups Should Put in Writing

Many startups are already using AI across research, writing, support, internal operations, and product workflows, but very few have written down clear rules for how AI should actually be used. That creates risk fast. In 2026, an AI usage policy is not just a legal or compliance document. It is a practical operating tool that helps founders set boundaries, reduce avoidable mistakes, and make sure the team uses AI in ways that protect users, data, and product quality without slowing down execution.

TL;DR: An AI usage policy helps startups decide what AI can be used for, what needs human review, what data should never be entered, and who is responsible when outputs are wrong.

Why startups suddenly need this in writing

A year or two ago, many teams treated AI use as an informal habit. People opened tools, experimented, copied outputs into docs, tested prompts, and moved on. In 2026, that is no longer enough.

AI is now involved in product ideation, marketing drafts, customer support, coding help, internal analysis, and sometimes even user-facing outputs. Once that happens across multiple people and workflows, the risk is no longer theoretical. Different team members start making different assumptions about what is allowed, what data is safe to use, and when human review is required.

That is why a written policy matters. It creates clarity before a mistake turns into a product problem, brand problem, or trust problem.

What an AI usage policy actually is

An AI usage policy is a simple written set of rules for how your team should and should not use AI. It does not need to sound legalistic. It needs to be useful.

At minimum, it should answer a few practical questions. What tools are approved? What kinds of tasks are okay to delegate to AI? What information should never be pasted into a model? When is human review mandatory? Who owns the final decision if an AI-generated output is wrong?

For early-stage teams, that is already enough to reduce a lot of confusion.

If you are still thinking more broadly about AI workflows, “AI + Human Workflows in 2026: The Best Hybrid Pattern” is a natural related read.

What should always be included

The first section should define approved use cases. For example, a startup may allow AI for brainstorming, rough drafting, summarization, customer support suggestions, internal research, or coding assistance, but not for final legal, medical, financial, or compliance decisions without review.

The second section should define restricted data. This is where the team writes down what should never be entered into AI tools: sensitive customer data, confidential business information, private contracts, raw financial details, unreleased strategy, or regulated information unless a specific approved setup exists.

The third section should define review rules. AI can help create output, but someone still needs to check that the output is accurate and safe, and that it is not biased, misleading, or simply low quality. The policy should say when review is optional and when it is mandatory.

The fourth section should define accountability. AI should never become the invisible decision-maker. A real person must still own the result.
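The four sections above can also be written down as a small structured record, which makes empty sections easy to spot. Here is a minimal sketch in Python. The class name `AIUsagePolicy`, its field names, and the example values are illustrative assumptions, not a standard schema.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AIUsagePolicy:
    """Hypothetical container for the four core policy sections."""
    approved_use_cases: list[str] = field(default_factory=list)
    restricted_data: list[str] = field(default_factory=list)
    review_required_for: list[str] = field(default_factory=list)
    accountable_owner: str = ""

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Example draft with two sections not yet filled in (values are made up).
draft = AIUsagePolicy(
    approved_use_cases=["brainstorming", "rough drafting", "summarization"],
    restricted_data=["sensitive customer data", "private contracts"],
)
print(draft.missing_sections())
# prints ['review_required_for', 'accountable_owner']
```

A draft that still reports missing sections is not ready to share with the team, however polished the rest of it reads.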

What startups usually forget to write down

The most common gap is data handling. Teams often say “don’t share sensitive information,” but never define what counts as sensitive in their actual business.
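One way to make “sensitive” concrete is to write it down as explicit patterns that a prompt can be checked against before it is sent anywhere. The sketch below is a rough illustration only. The pattern list and the function name `find_restricted` are assumptions, and a simple regex screen will miss plenty of real cases, so it complements the written rule rather than replacing it.

```python
import re

# Hypothetical patterns; replace with what counts as sensitive in your business.
RESTRICTED_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "card-like number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
    "confidential marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def find_restricted(text: str) -> list[str]:
    """Return the label of every restricted pattern found in the text."""
    return [
        label
        for label, pattern in RESTRICTED_PATTERNS.items()
        if pattern.search(text)
    ]


print(find_restricted("Summarize the call with jane@example.com"))
# prints ['email address']
print(find_restricted("Draft three taglines for a note-taking app"))
# prints []
```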

Another gap is output verification. People assume everyone knows AI can hallucinate or oversimplify, but they still copy drafts into emails, content, support replies, or product specs too quickly.

A third gap is tool sprawl. Without a policy, people use random AI products across the company. That makes it harder to understand where information goes and what standards apply.

Startups also forget to define customer-facing transparency. If AI is involved in user-facing recommendations, summaries, assistant replies, or generated outputs, the team should decide when and how that should be disclosed.

This overlaps with “AI Reliability in 2026: How to Avoid Bad Outputs.”

How detailed should the policy be?

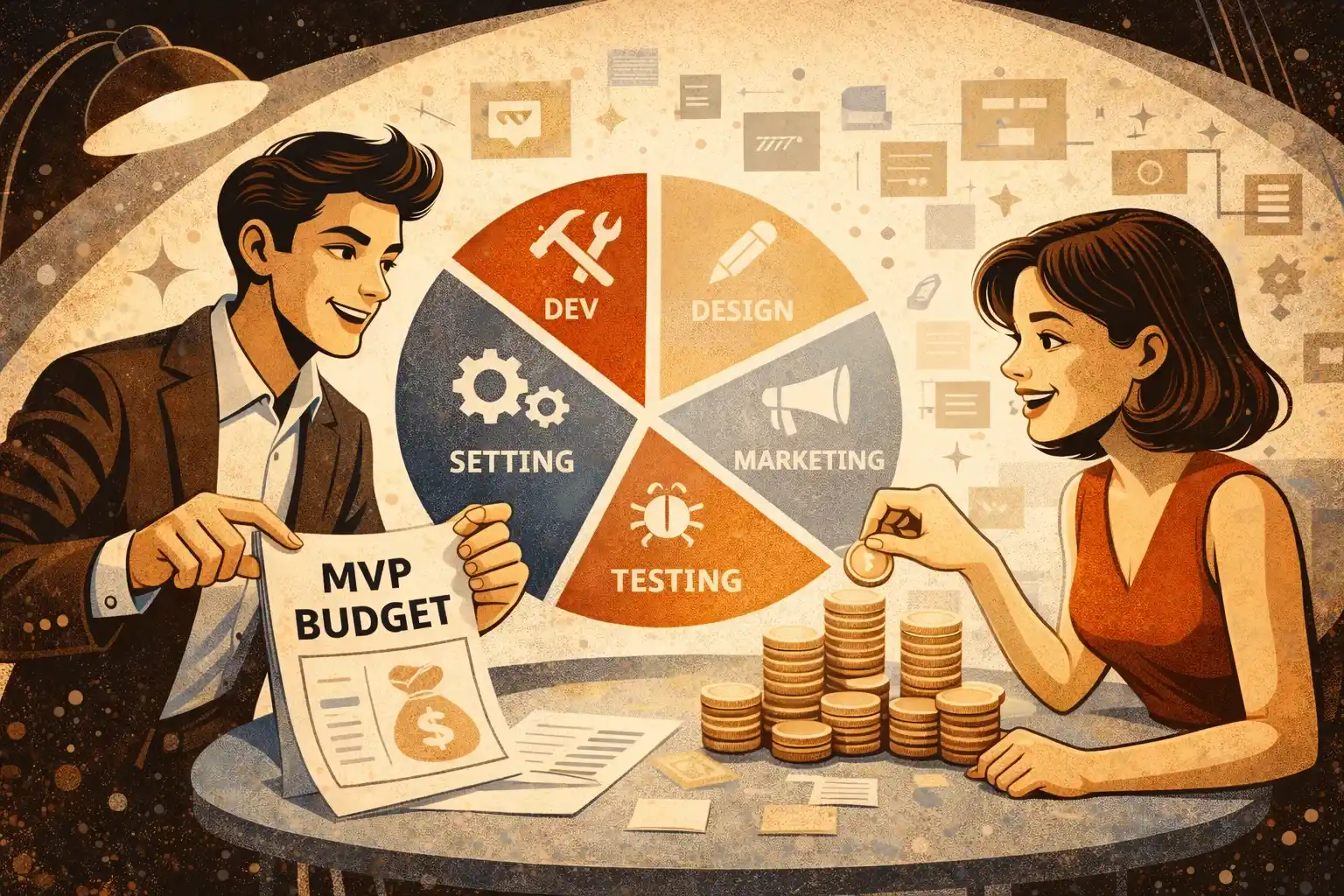

For most startups, shorter is better. A practical document of three to five pages is usually more useful than a twenty-page policy nobody reads.

Early-stage founders should think in layers. Start with a short core policy that covers approved use, forbidden use, data boundaries, review rules, and ownership. Then add a few workflow-specific rules only where needed. For example, marketing may need one set of rules, engineering another, and customer support another.

The goal is not to create bureaucracy. The goal is to reduce preventable mistakes while keeping the team fast.

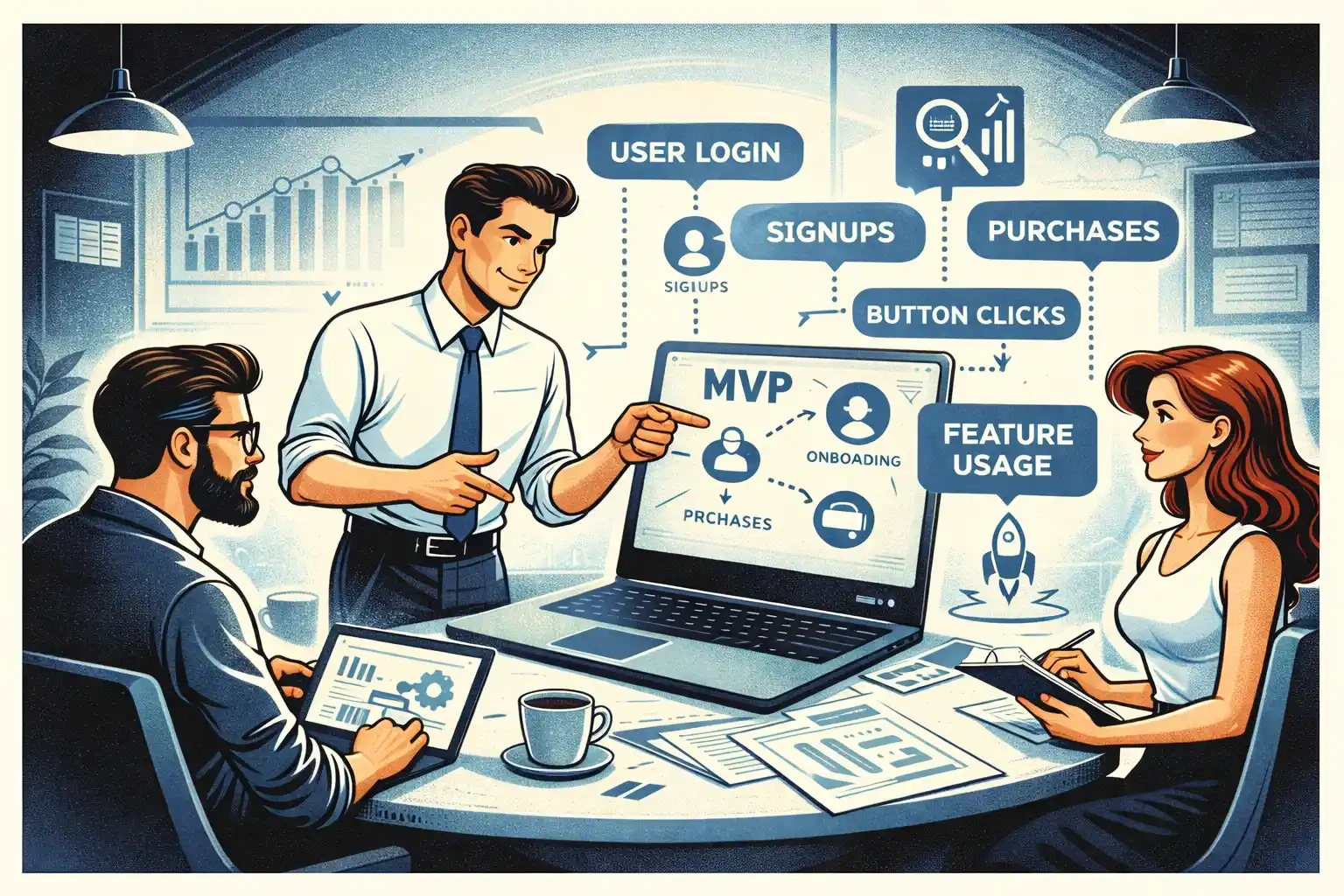

That logic is consistent with “AI-Powered MVP Development: Save Time and Budget Without Cutting Quality.”

What should be different for user-facing AI products

If your startup is building AI into the product itself, the policy needs one extra layer. Internal AI use is one thing. Product AI is another.

Once users see AI outputs, you need written decisions about fallback behavior, how confidence is communicated, when to escalate to a human, data boundaries, and whether users can rely on the output for anything important. The more serious the use case, the less acceptable it is to treat AI behavior as “we will fix it later.”
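A written fallback and escalation rule can be as small as a single function. The sketch below assumes each output carries a confidence score between 0 and 1. How that score is produced is out of scope here, and many models do not provide a reliable one. The names `ModelOutput`, `respond`, and `FALLBACK_MESSAGE`, the 0.8 threshold, and the choice to always escalate high-stakes topics are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    text: str
    confidence: float  # assumed score in [0, 1]; the source of the score is not specified


FALLBACK_MESSAGE = "We could not generate a reliable answer. A team member will follow up."


def respond(output: ModelOutput, high_stakes: bool,
            min_confidence: float = 0.8) -> tuple[str, bool]:
    """Return (message shown to the user, whether to escalate to a human)."""
    # Assumption: high-stakes topics always go to a human, whatever the score.
    if high_stakes:
        return FALLBACK_MESSAGE, True
    # Low-confidence answers are replaced by the fallback and escalated.
    if output.confidence < min_confidence:
        return FALLBACK_MESSAGE, True
    return output.text, False


message, escalate = respond(ModelOutput("Your plan renews on May 1.", 0.93),
                            high_stakes=False)
print(message, escalate)
```

The exact threshold matters less than the fact that it is written down and that someone owns the decision to change it.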

A startup building internal productivity tools may need a lightweight policy. A startup building AI into healthcare, fintech, hiring, education, or advice-like experiences needs much more care, even at MVP stage.

That is where “AI MVP Features in 2026: What’s Worth Building” becomes useful, because many risk problems begin with putting the wrong AI behavior into version one.

A simple founder framework for writing one

Start by listing every place your team currently uses AI: not where you plan to use it, but where you already use it.

Then divide those uses into three buckets: low-risk, review-required, and restricted.

Then write simple rules for each bucket. Low-risk might include brainstorming or rough internal drafts. Review-required might include external communication, specs, code, or research summaries. Restricted might include sensitive data, regulated decisions, or customer-facing outputs without safeguards.
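The three buckets can be sketched as a small triage function. This is one possible reading of the rules above, not a definitive mapping. The flag names on `AIUse`, the function name `classify`, and the choice to treat customer-facing uses with safeguards as review-required are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Bucket(Enum):
    LOW_RISK = "low-risk"
    REVIEW_REQUIRED = "review-required"
    RESTRICTED = "restricted"


@dataclass
class AIUse:
    """Hypothetical description of one place where the team uses AI."""
    name: str
    uses_sensitive_data: bool = False
    regulated_decision: bool = False
    customer_facing: bool = False
    has_safeguards: bool = False
    # External communication, specs, code, or research summaries.
    feeds_real_work: bool = False


def classify(use: AIUse) -> Bucket:
    """Assign a use to the strictest bucket that applies."""
    if use.uses_sensitive_data or use.regulated_decision:
        return Bucket.RESTRICTED
    if use.customer_facing and not use.has_safeguards:
        return Bucket.RESTRICTED
    # Assumption: safeguarded customer-facing output still needs human review.
    if use.customer_facing or use.feeds_real_work:
        return Bucket.REVIEW_REQUIRED
    return Bucket.LOW_RISK


for use in [
    AIUse("brainstorming campaign ideas"),
    AIUse("drafting a reply to a customer", feeds_real_work=True),
    AIUse("in-app assistant without guardrails", customer_facing=True),
]:
    print(f"{use.name}: {classify(use).value}")
```

Checking the restricted conditions first means a use that matches several rules always lands in the strictest bucket.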

Then name an owner. Someone on the founding or product side should be responsible for updating the policy as the company changes.

This is also where “What Non-Technical Founders Should Know in 2026” fits well, because founders do not need to become AI governance experts, but they do need enough clarity to lead responsibly.

What founders should avoid

Do not write a generic policy copied from a large company. It will sound polished and still fail your real workflows.

Do not make the rules so strict that the team ignores them. If the policy blocks obvious low-risk uses, people will work around it.

Do not make the opposite mistake either. A vague policy like “use AI responsibly” is almost useless.

And do not forget to revisit it. A startup that begins with AI for internal drafting may, six months later, be using it in support, product operations, or user-facing features. The rules should evolve with reality.

This is closely related to “Using AI in Startup Development in 2026.”

What a good first version looks like

A good first version of an AI usage policy is short, specific, and readable in ten minutes. It usually includes:

A quick statement of purpose.

A list of approved tools.

A list of allowed use cases.

A list of restricted or forbidden use cases.

Clear data-sharing boundaries.

Human review requirements.

Ownership and update responsibility.

That is enough for most startups to begin using AI more intentionally instead of treating it like an unstructured shortcut.

Final thought

In 2026, an AI usage policy is not a “big company” document. It is an early operating decision.

For startups, the point is simple: keep the team fast, keep the outputs useful, and reduce avoidable risk before trust gets damaged. The best AI policy is not the one that sounds most official. It is the one people actually follow.

Thinking about building an AI-powered MVP in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps — including AI-powered workflows — with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

Do early-stage startups really need an AI usage policy?

Yes. Even a short policy helps prevent confusion around data, review, and accountability.

How long should an AI usage policy be?

Usually short. For most startups, a practical 3–5 page document is enough to start.

What is the most important rule to include?

Clear limits on what data can be entered into AI tools and when human review is required.

Should customer-facing AI be covered separately?

Usually yes. Internal AI usage and product-facing AI create different kinds of risk.

Who should own the policy inside a startup?

Usually a founder, product lead, or operations lead: someone close enough to real workflows to update it as the company evolves.

Should the policy list specific tools?

Yes. That makes expectations clearer and reduces tool sprawl across the team.

How often should it be updated?

Whenever AI starts being used in new workflows, new tools are approved, or the company begins exposing AI directly to users.
