How Agencies Build MVPs in 2026: Process Basics

In 2026, agencies can ship MVPs faster than ever, but speed doesn’t automatically produce the right product. Many founders hire an agency expecting a “done” app, then spend weeks watching progress updates and end up with a version nobody uses. This article breaks down the modern agency MVP process in plain English: what happens in each phase, what outputs you should see, and what decisions you (the founder) must make to keep momentum. You’ll also learn the most common traps and how to avoid paying for the wrong kind of “process.”

TL;DR: A strong agency MVP process in 2026 is simple: clarify the problem, cut to the smallest useful scope, design the key flow, build in short releases, track real user behavior, then iterate. You should never feel like you’re paying for meetings — each phase should end with visible outputs (prototype, backlog, working builds, metrics).

Why agency process matters more in 2026 (even though building is faster)

A lot of founders assume: “If an agency can build fast, the process doesn’t matter.”

But in 2026, the main risk isn’t whether your team can ship screens—it’s whether you ship something users actually adopt.

Modern agencies use AI and better tooling to move faster. The good ones use that speed to reduce waste. The bad ones use it to produce more output without better decisions.

If you want a bigger lens on the mindset shift, this article is a good companion: AI-Powered MVP Development: Save Time and Budget Without Cutting Quality.

What “a good agency” means (in practical terms)

Forget fancy decks. A good agency behaves like an operator team:

- They challenge scope and push you toward the smallest useful release

- They make tradeoffs explicit (time, cost, quality, risk)

- They ship in increments you can test

- They care about metrics and early user behavior

The core MVP process agencies use in 2026

Here’s the process as a simple, phase-by-phase walkthrough.

Phase 0: Kickoff (alignment, not “onboarding theatre”)

Goal: remove ambiguity and set working rules.

What should happen:

- Confirm product goal and target user in plain language

- Decide how decisions will be made (who signs off, how fast)

- Set a weekly cadence (demo day + feedback window)

- Set a single source of truth (scope doc / backlog / design file)

What you should receive:

- A one-page summary of goals, constraints, and success signals

- A timeline with checkpoints (not a promise, a plan)

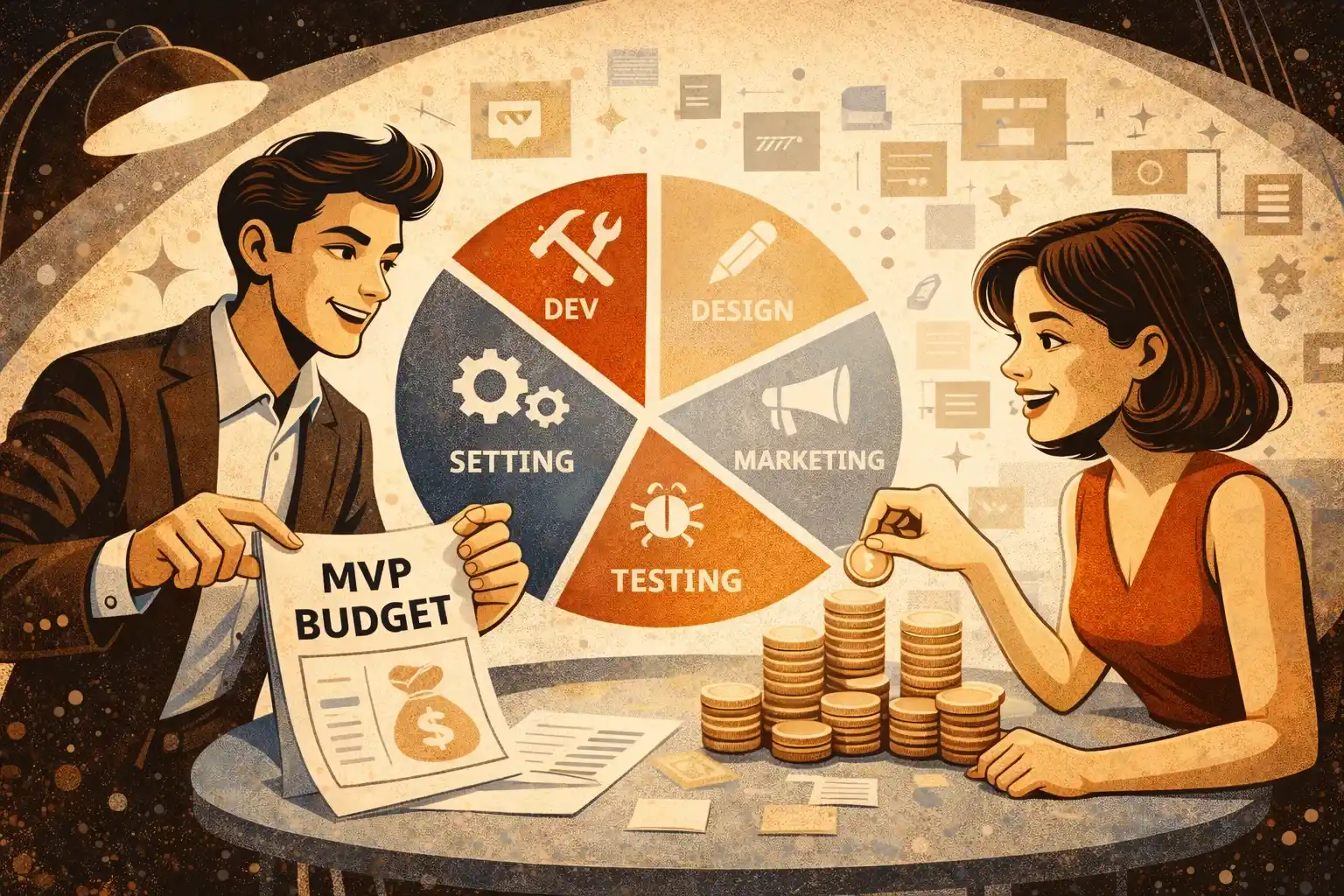

Phase 1: Discovery and scope (the part founders skip and regret)

Goal: define what “MVP” means for this product.

Good agencies run discovery quickly and practically. It’s not research for its own sake; it’s about cutting risk.

What should happen:

- Clarify the core user flows (2–5 flows, not 20)

- Define the MVP boundary: what’s in, what’s out

- List assumptions and risks (payments, permissions, moderation, integrations)

- Define first metrics (activation, retention proxy, revenue proxy)

What you should receive:

- A crisp scope with priorities and a “not now” list

- Clear user flows with edge cases

- A lightweight tech plan and risk list

- An estimate range tied to the scope

If you want a clear standard for what discovery should deliver, read Discovery Phase in 2026: What You Should Receive.

Phase 2: UX/UI design (reduce confusion before code)

Goal: make the product understandable for a first-time user.

What should happen:

- Build a clickable prototype of the key flow

- Confirm onboarding, empty states, and the “aha” moment

- Align on design system basics (buttons, typography, spacing)

What you should receive:

- A clickable prototype that covers the MVP flow

- A UI kit of reusable components (even if minimal)

- A clear list of screens and states included in MVP

Important: design isn’t about aesthetics first — it’s about reducing rework.

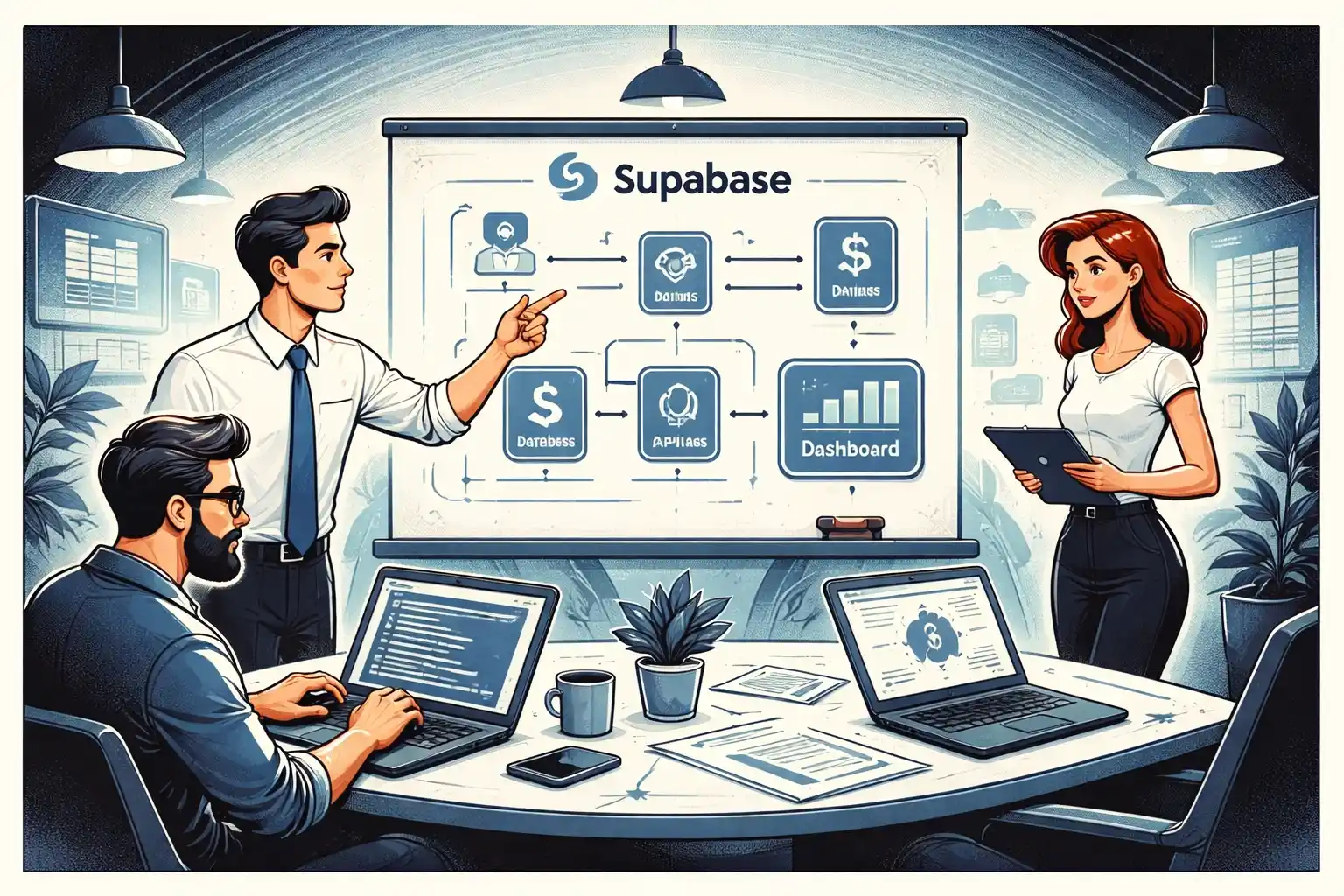

Phase 3: Build (short releases, not a long silent sprint)

Goal: ship a working product in increments.

What should happen:

- Build in weekly or two-week milestones, each ending with a demo

- Keep scope stable inside a sprint

- Use AI to speed up repeatable tasks (scaffolding, boilerplate, tests) while still doing real review

What you should see weekly:

- A working build you can click (staging)

- A short update: what’s done, what’s next, what’s blocked

- Any scope adjustments documented

If you’re deciding between building web vs mobile first, this helps frame the tradeoff: App Development Cost for Startups: Web vs Mobile vs SaaS.

Phase 4: QA (quality is not a “phase at the end”)

Goal: make the MVP stable enough for real users.

Good agencies treat QA as continuous:

- Basic test plan from week one

- Regression checks each release

- Bug triage with severity levels

What you should receive:

- A visible list of known issues and what’s postponed

- A “release checklist” before launch
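To show how severity-based triage can feed both the known-issues list and the release checklist, here is a minimal Python sketch. The four-level scale, the `Bug` fields, and the rule that only critical and high bugs block a release are hypothetical assumptions for illustration; real teams define their own levels and thresholds.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical scale: a lower number means more severe.
    CRITICAL = 1  # crash, data loss, payment failure
    HIGH = 2      # core flow broken, no workaround
    MEDIUM = 3    # workaround exists
    LOW = 4       # cosmetic


@dataclass
class Bug:
    bug_id: str
    title: str
    severity: Severity


def triage(bugs: list[Bug], block_at: Severity = Severity.HIGH) -> tuple[list[Bug], list[Bug]]:
    """Split bugs into (release blockers, postponed known issues), most severe first."""
    blockers = sorted((b for b in bugs if b.severity <= block_at), key=lambda b: b.severity)
    postponed = sorted((b for b in bugs if b.severity > block_at), key=lambda b: b.severity)
    return blockers, postponed


bugs = [
    Bug("B-1", "Typo on settings screen", Severity.LOW),
    Bug("B-2", "Checkout crashes on iOS", Severity.CRITICAL),
    Bug("B-3", "Avatar upload is slow", Severity.MEDIUM),
]
blockers, postponed = triage(bugs)
print([b.bug_id for b in blockers])   # ['B-2']
print([b.bug_id for b in postponed])  # ['B-3', 'B-1']
```

The point of the design is that "ready to launch" becomes a simple check (no blockers left), while everything postponed stays visible instead of silently disappearing.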

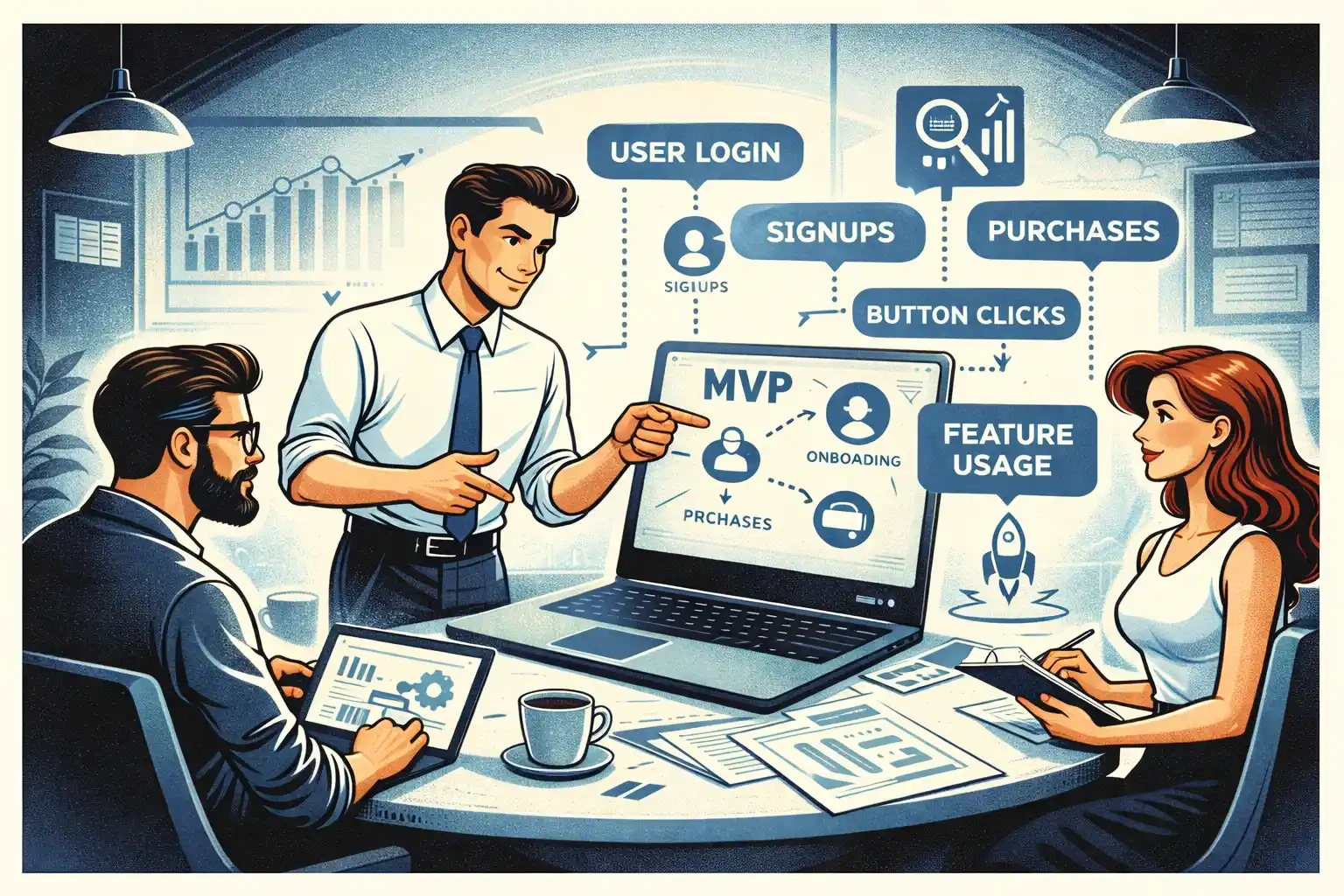

Phase 5: Launch and early traction (where MVP becomes real)

Goal: get the MVP into users’ hands and learn.

What should happen:

- Production setup (domains, environments, monitoring)

- Analytics events connected to a dashboard

- A first “7–30 day plan” for iteration (based on real usage)

What you should receive:

- Production release + rollback plan

- Basic metrics dashboard definition (what you’re tracking)

- A prioritized next-steps list based on early data
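A “metrics dashboard definition” can be as small as a couple of precisely defined numbers. Here is a minimal Python sketch of two of the first metrics from discovery: an activation rate and a simple retention proxy, computed from raw analytics events. The event names (`signed_up`, `core_action`), the 7-day activation window, and the “core action on two or more distinct days” proxy are hypothetical assumptions, not a standard schema or any analytics tool’s API.

```python
from dataclasses import dataclass
from datetime import date, datetime, timedelta


@dataclass
class Event:
    user_id: str
    name: str  # hypothetical event names: "signed_up", "core_action"
    at: datetime


def activation_rate(events: list[Event], window: timedelta = timedelta(days=7)) -> float:
    """Share of signed-up users who did the core action within `window` of signing up."""
    signups = {e.user_id: e.at for e in events if e.name == "signed_up"}
    if not signups:
        return 0.0
    activated = {
        e.user_id
        for e in events
        if e.name == "core_action"
        and e.user_id in signups
        and signups[e.user_id] <= e.at <= signups[e.user_id] + window
    }
    return len(activated) / len(signups)


def repeat_usage_rate(events: list[Event]) -> float:
    """Retention proxy: share of signed-up users with core actions on 2+ distinct days."""
    signups = {e.user_id for e in events if e.name == "signed_up"}
    if not signups:
        return 0.0
    days_by_user: dict[str, set[date]] = {}
    for e in events:
        if e.name == "core_action" and e.user_id in signups:
            days_by_user.setdefault(e.user_id, set()).add(e.at.date())
    repeaters = sum(1 for days in days_by_user.values() if len(days) >= 2)
    return repeaters / len(signups)


t0 = datetime(2026, 1, 5, 9, 0)
events = [
    Event("u1", "signed_up", t0),
    Event("u1", "core_action", t0 + timedelta(hours=2)),
    Event("u1", "core_action", t0 + timedelta(days=3)),
    Event("u2", "signed_up", t0),
    Event("u2", "core_action", t0 + timedelta(days=10)),  # outside the 7-day window
    Event("u3", "signed_up", t0),
    Event("u4", "signed_up", t0),
]
print(activation_rate(events))    # 0.25
print(repeat_usage_rate(events))  # 0.25
```

Writing the definition down this precisely (which event, which window) is what keeps the dashboard from turning into a debate about what “active” means.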

Founders often underestimate how important metrics are at this stage. If you want the investor-friendly view, read Your First Product Metrics Dashboard: What Early-Stage Investors Want to See.

The decisions you must make (or the agency will decide for you)

Even the best agency can’t save an MVP if the founder avoids decisions.

You must decide:

- Who the primary user is (one main persona for MVP)

- The single core action that defines “value”

- The MVP boundary (what’s excluded)

- Your release tradeoff: speed vs polish

If you don’t decide, the product becomes a compromise — and compromises rarely convert.

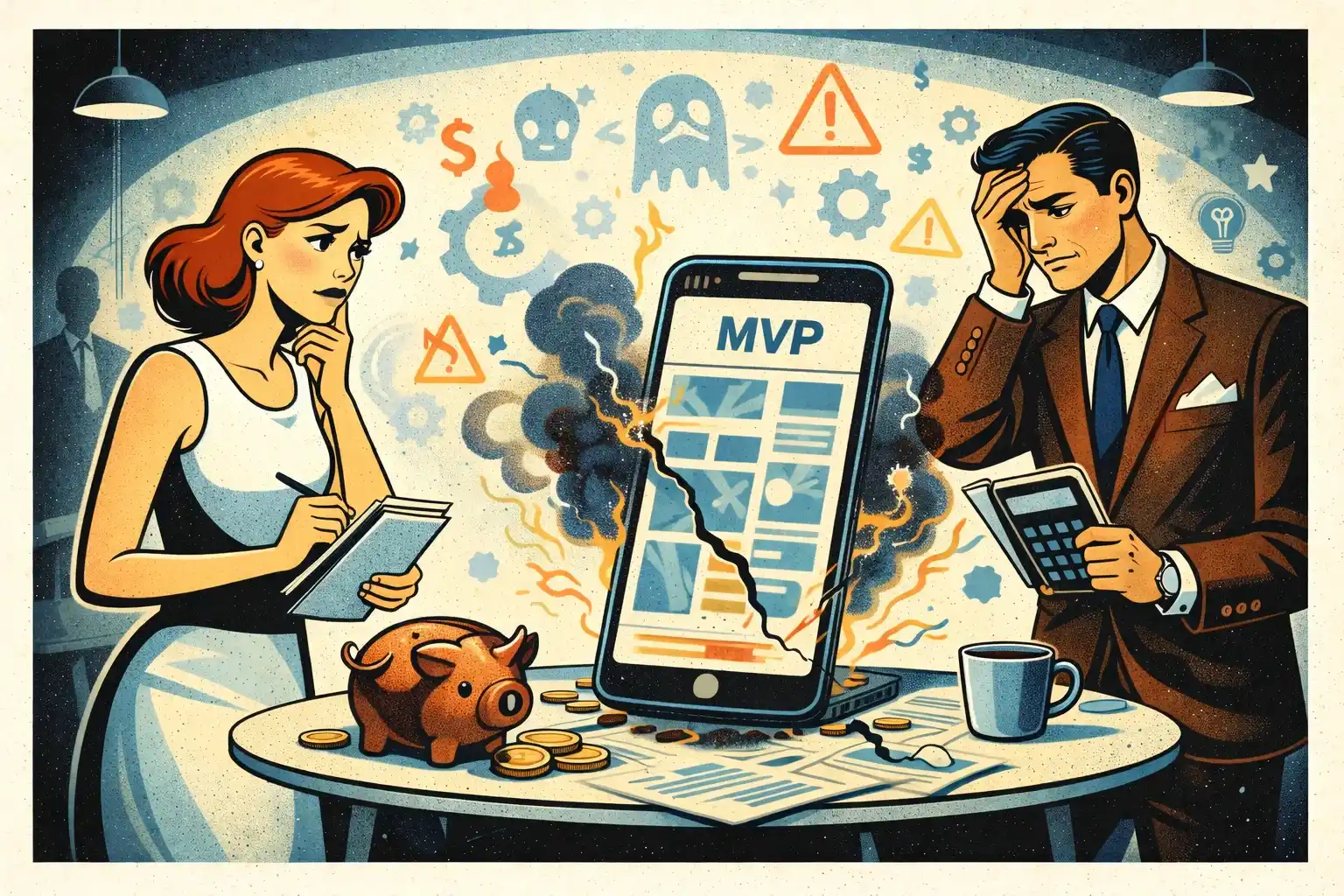

The most common agency traps (and how to avoid them)

Trap 1: Vague scope = endless tickets

If scope is not explicit, you’ll pay for “clarifications” forever.

Fix:

- Require an MVP scope list + exclusions

- Tie estimates to that scope

Trap 2: “Everything is easy” promises

When an agency never pushes back, they’re either inexperienced or incentivized to expand scope.

Fix:

- Ask what they would cut first and why

- Ask what could blow up time/cost

Trap 3: Big-bang delivery

Weeks of silence, then a giant handoff.

Fix:

- Require weekly demos and a staging build

- Track progress by working releases

Trap 4: No ownership clarity

If you don’t own repo access, design files, and environments, you’re locked in.

Fix:

- Confirm ownership in writing from day one

For a broader “what to watch for” guide, see Outsource Development for Startups: Pros, Cons, and Red Flags.

How AI changes the agency process in 2026 (the founder-friendly truth)

AI doesn’t replace the process — it compresses it.

What you should expect from a modern agency:

- Faster iteration on UI and small features

- Better documentation and clearer handoffs

- More time spent on decisions and quality, less on boilerplate

What you should not accept:

- Shipping unreviewed AI-generated code into production

- Using “AI speed” as an excuse for vague estimates

In other words: AI can help teams ship faster, but only disciplined teams ship better.

Thinking about building a web or mobile MVP in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps — including AI-powered workflows — with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

How long does an agency MVP usually take in 2026?

Many MVPs can ship in 4–8 weeks if scope is tight and decisions are fast. Complexity, integrations, and platform count (web + iOS + Android) can extend that.

What should I see each week while the agency builds?

A working staging build, a clear list of what shipped, what’s next, and any blockers. If you only get status messages, the process is weak.

Do I always need a discovery phase?

If your scope is already clear and validated, discovery can be short. But you still need MVP boundaries, risks, and metrics — otherwise you’ll pay for rework.

How do I prevent scope creep with an agency?

Agree on an MVP scope + explicit exclusions, freeze scope inside each sprint, and treat changes as a tradeoff (something is cut or timeline changes).

What do I own after the project ends?

You should own the code repository, design files, environments, documentation, and any paid accounts created for the product.

What’s the biggest difference between good and bad agencies?

Good agencies push back, simplify, and ship in increments. Bad agencies accept everything, overbuild, and deliver late.

Can AI replace an agency process?

No. AI can speed up implementation, but it can’t define scope, product boundaries, or the right tradeoffs. You still need a real process and accountability.
