AI + Human Workflows in 2026: The Best Hybrid Pattern

Most AI products in 2026 don’t fail because the model is “bad.” They fail because teams try to automate too much too early, then get unreliable outputs, rising costs, and a support nightmare. The hybrid approach (AI + human workflows) is often the fastest path to a usable MVP and a scalable product. This article explains the best pattern: what AI should do, what humans should do, how to design handoffs, and which signals tell you it’s time to automate more.

TL;DR: The best hybrid pattern in 2026 is “AI drafts, humans approve, the system learns.” Keep humans in the loop on decision-heavy steps, automate the repeatable parts, and design explicit states for review and exceptions. Hybrid isn’t a temporary hack; it’s how you ship reliable value early, control costs, and scale without breaking trust.

Why hybrid workflows win in 2026

In 2026, AI can generate output quickly. That doesn’t guarantee:

- correctness

- safety

- consistency

- trust

Hybrid workflows win because they convert AI from a fragile “oracle” into a reliable production system.

The “best hybrid pattern” in one sentence

AI drafts → humans review → the product captures feedback → automation expands only where reliability is proven.

That’s it.

Everything else is implementation details.

If you’re deciding which AI features are worth shipping at all, start with AI MVP Features in 2026: What’s Worth Building.

Step 1: Identify the steps that should stay human

Humans should own:

- judgment calls

- policy decisions

- edge cases

- high-stakes outcomes

- trust-sensitive actions

Examples:

- approving content that could be unsafe

- deciding whether a user request qualifies

- interpreting ambiguous inputs

- signing off on recommendations that create liability

Founder rule: if a wrong decision creates real harm (financial, medical, legal, reputational), keep a human review step at MVP stage.

Step 2: Identify the steps AI should own

AI should own work that is:

- repeatable

- time-consuming

- low-risk when imperfect

- easy to validate with rules

Examples:

- summarizing inputs

- drafting messages

- extracting structured fields

- classifying tickets into categories

- ranking options with “confidence + reasoning”
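To make “easy to validate with rules” concrete, here is a minimal Python sketch for the ticket-classification example. It assumes the model is asked to return JSON with three hypothetical fields (`category`, `summary`, `confidence`); the category list and length limit are placeholders, not a recommended schema.

```python
import json

# Hypothetical schema for the ticket-classification example.
# The categories and limits are illustrative assumptions.
ALLOWED_CATEGORIES = {"billing", "bug", "feature_request", "account"}
MAX_SUMMARY_CHARS = 280


def validate_ticket_output(raw: str) -> list[str]:
    """Check an AI draft against simple rules; an empty list means it passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]

    errors = []
    category = data.get("category")
    if not isinstance(category, str) or category not in ALLOWED_CATEGORIES:
        errors.append(f"unknown category: {category!r}")

    summary = data.get("summary")
    if not isinstance(summary, str) or not summary.strip():
        errors.append("summary is missing or empty")
    elif len(summary) > MAX_SUMMARY_CHARS:
        errors.append("summary is too long")

    confidence = data.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if (
        not isinstance(confidence, (int, float))
        or isinstance(confidence, bool)
        or not 0 <= confidence <= 1
    ):
        errors.append("confidence must be a number between 0 and 1")
    return errors


good = '{"category": "billing", "summary": "Refund for a duplicate charge.", "confidence": 0.92}'
bad = '{"category": "misc", "summary": "", "confidence": 1.7}'
print(validate_ticket_output(good))  # []
print(validate_ticket_output(bad))
```

Anything that fails a rule can be routed to human review instead of reaching the user, which is what keeps imperfect AI output low-risk.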

When AI output is editable, a wrong draft costs the user a quick fix instead of a failed task, so the UX stays resilient.

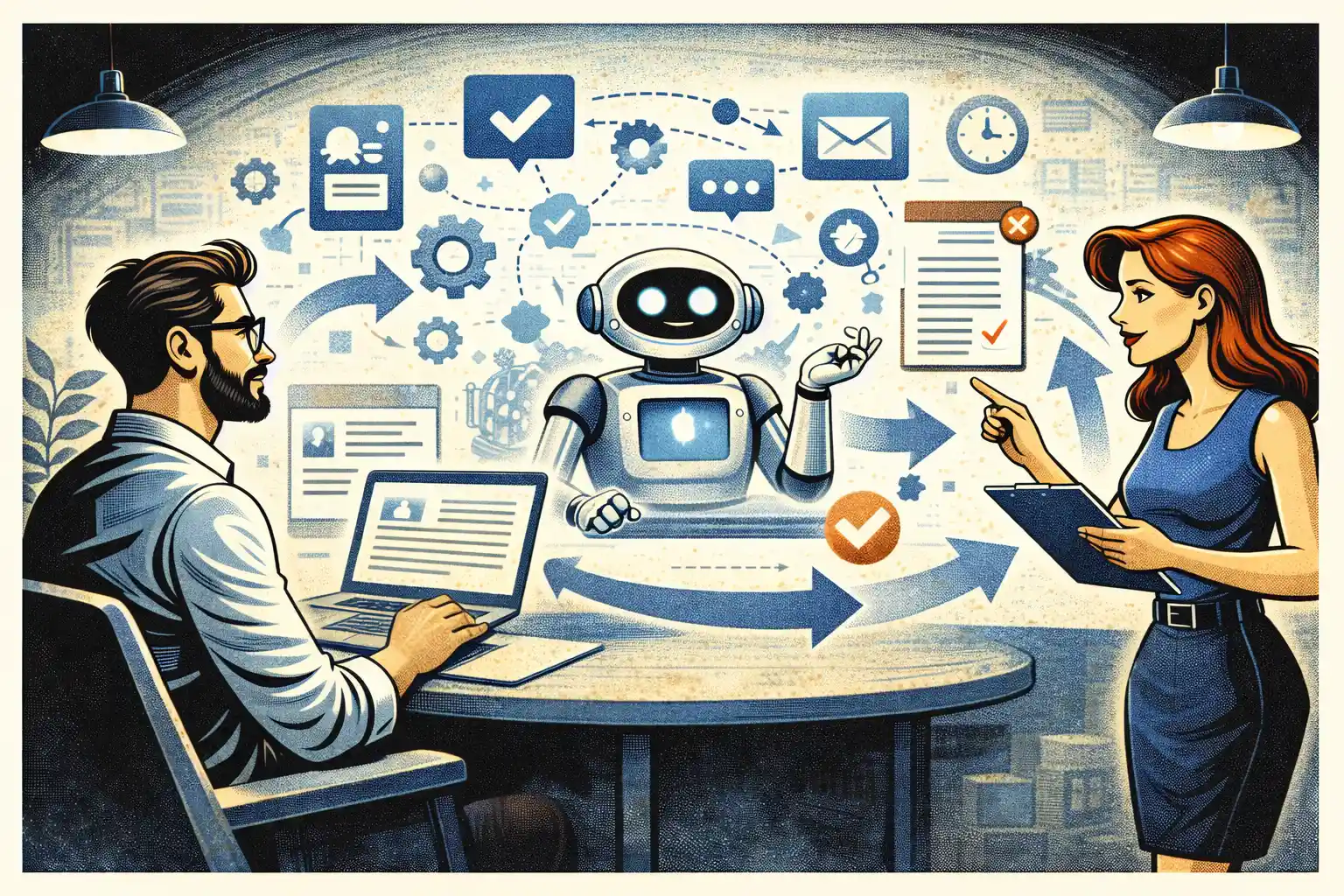

Step 3: Design the handoff as a product feature

Most hybrid MVPs fail at the handoff.

A good handoff needs:

- a clear queue (what needs review)

- consistent states (draft → in_review → approved → sent)

- one-click actions (approve, reject, edit)

- an audit trail (who did what)
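One way to make the states explicit, sketched in Python under assumptions: the state names come from the list above, while the `rejected` state, the transition map, and the `ReviewItem` class are hypothetical. Every transition is checked against an allow-list and appended to an audit log with the actor and a timestamp.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Allowed transitions. "draft", "in_review", "approved", "sent" come from the
# handoff states; "rejected" and the map itself are illustrative assumptions.
TRANSITIONS = {
    "draft": {"in_review"},
    "in_review": {"approved", "rejected", "draft"},  # "draft" = sent back for edits
    "approved": {"sent"},
    "rejected": set(),
    "sent": set(),
}


@dataclass
class ReviewItem:
    item_id: str
    state: str = "draft"
    audit_log: list[dict] = field(default_factory=list)

    def transition(self, new_state: str, actor: str, note: str = "") -> None:
        """Move to new_state if allowed, recording who did it and when."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot go from {self.state!r} to {new_state!r}")
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "from": self.state,
            "to": new_state,
            "note": note,
        })
        self.state = new_state


item = ReviewItem("ticket-123")
item.transition("in_review", actor="system")
item.transition("approved", actor="reviewer@example.com")
item.transition("sent", actor="system")
print(item.state)  # sent
```

With the state stored on each item, the review queue is simply a query for items in `in_review`, and the audit trail is written by the same code path that changes state.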

If the handoff lives in Slack threads, you’ll never scale.

This is the same philosophy behind manual-first MVPs: software captures intent and state, humans handle uncertainty until the workflow stabilizes. See Manual-First MVPs in 2026: What to Do Before Automating.

Step 4: Add “confidence gating” (don’t treat all outputs equally)

A practical hybrid pattern in 2026 is confidence gating:

- High-confidence outputs can auto-apply.

- Medium-confidence outputs require light review.

- Low-confidence outputs require full manual handling.

This keeps the system fast without sacrificing trust.
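A minimal routing sketch for the three tiers, assuming each output already carries a 0 to 1 confidence value. The thresholds (0.9 and 0.6) and the `high_stakes` flag are illustrative assumptions, to be tuned against your own review data.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPLY = "auto_apply"
    LIGHT_REVIEW = "light_review"
    FULL_MANUAL = "full_manual"


# Illustrative thresholds; tune them against your approval and correction data.
HIGH_THRESHOLD = 0.9
LOW_THRESHOLD = 0.6


def route_output(confidence: float, high_stakes: bool = False) -> Route:
    """Pick a handling path for one AI output based on its confidence."""
    # High-stakes outputs never auto-apply; they always get at least a review.
    if confidence >= HIGH_THRESHOLD and not high_stakes:
        return Route.AUTO_APPLY
    if confidence >= LOW_THRESHOLD:
        return Route.LIGHT_REVIEW
    return Route.FULL_MANUAL


for score in (0.95, 0.75, 0.40):
    print(score, route_output(score).value)
```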

The key is that confidence is not just a model score. You can create confidence signals from:

- rule checks

- input completeness

- similarity to known good cases

- successful history for this user segment
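Because confidence isn't just a model score, a simple approach is a weighted blend of the signals above. This is a sketch under assumptions: the `Signals` fields, the weights, and the cap applied when a rule fails are hypothetical choices, not a standard formula.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    model_score: float               # 0..1, if your model exposes one
    rules_passed: int                # validation rules that passed
    rules_total: int
    required_fields_present: int     # input completeness
    required_fields_total: int
    similarity_to_known_good: float  # 0..1, however you measure it (assumed)
    segment_approval_rate: float     # 0..1, past approval rate for this segment


# Hypothetical weights; they sum to 1 so the result stays in 0..1.
WEIGHTS = {"model": 0.2, "rules": 0.3, "completeness": 0.2,
           "similarity": 0.15, "history": 0.15}
# Any failed rule caps the score here, keeping it out of an auto-apply tier.
RULE_FAILURE_CAP = 0.5


def composite_confidence(s: Signals) -> float:
    """Blend several signals into one 0..1 confidence value."""
    # No rules means no evidence; no required fields means nothing is missing.
    rules = s.rules_passed / s.rules_total if s.rules_total else 0.0
    completeness = (
        s.required_fields_present / s.required_fields_total
        if s.required_fields_total else 1.0
    )
    score = (
        WEIGHTS["model"] * s.model_score
        + WEIGHTS["rules"] * rules
        + WEIGHTS["completeness"] * completeness
        + WEIGHTS["similarity"] * s.similarity_to_known_good
        + WEIGHTS["history"] * s.segment_approval_rate
    )
    if s.rules_total and s.rules_passed < s.rules_total:
        score = min(score, RULE_FAILURE_CAP)
    return round(score, 3)


strong = Signals(model_score=0.8, rules_passed=4, rules_total=4,
                 required_fields_present=5, required_fields_total=5,
                 similarity_to_known_good=0.7, segment_approval_rate=0.9)
print(composite_confidence(strong))  # 0.9
```

A linear blend is easy to explain to reviewers and easy to adjust; the hard cap keeps a single failed rule from being averaged away by otherwise strong signals.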

Step 5: Build the exception path first

The exception path is where trust is built.

Minimum exception handling:

- a visible “something went wrong” state

- a manual override option

- a way to request human help

- a reason code (so you can learn)
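A minimal sketch of the exception path in Python. The reason codes, user-facing messages, and the `ExceptionRecord` shape are hypothetical; the point is that every failure gets a visible state, a flag for manual takeover, and a code you can count later.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class ReasonCode(Enum):
    # Illustrative codes; extend them from what reviewers actually see.
    INVALID_OUTPUT = "invalid_output"
    MISSING_INPUT = "missing_input"
    POLICY_RISK = "policy_risk"
    USER_REQUESTED_HELP = "user_requested_help"


# Message shown in the visible "something went wrong" state.
USER_MESSAGES = {
    ReasonCode.INVALID_OUTPUT: "We couldn't produce a reliable result. A teammate will review it.",
    ReasonCode.MISSING_INPUT: "We need a bit more information to continue.",
    ReasonCode.POLICY_RISK: "This request needs a human review before we can proceed.",
    ReasonCode.USER_REQUESTED_HELP: "A teammate has been notified and will follow up.",
}


@dataclass
class ExceptionRecord:
    item_id: str
    reason: ReasonCode
    user_message: str
    manual_override: bool = False  # set to True when a human takes over the item


def open_exception(item_id: str, reason: ReasonCode) -> ExceptionRecord:
    """Record a failure explicitly instead of failing silently."""
    return ExceptionRecord(item_id, reason, USER_MESSAGES[reason])


def top_reasons(records: list[ExceptionRecord], n: int = 3) -> list[tuple[str, int]]:
    """Count reason codes so you know which failure to fix first."""
    return Counter(r.reason.value for r in records).most_common(n)


records = [
    open_exception("a", ReasonCode.INVALID_OUTPUT),
    open_exception("b", ReasonCode.MISSING_INPUT),
    open_exception("c", ReasonCode.INVALID_OUTPUT),
]
print(top_reasons(records))  # [('invalid_output', 2), ('missing_input', 1)]
```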

If you skip exceptions, support becomes your product.

If you want a reliability-first approach, this is the full guide: AI Reliability in 2026: How to Avoid Bad Outputs.

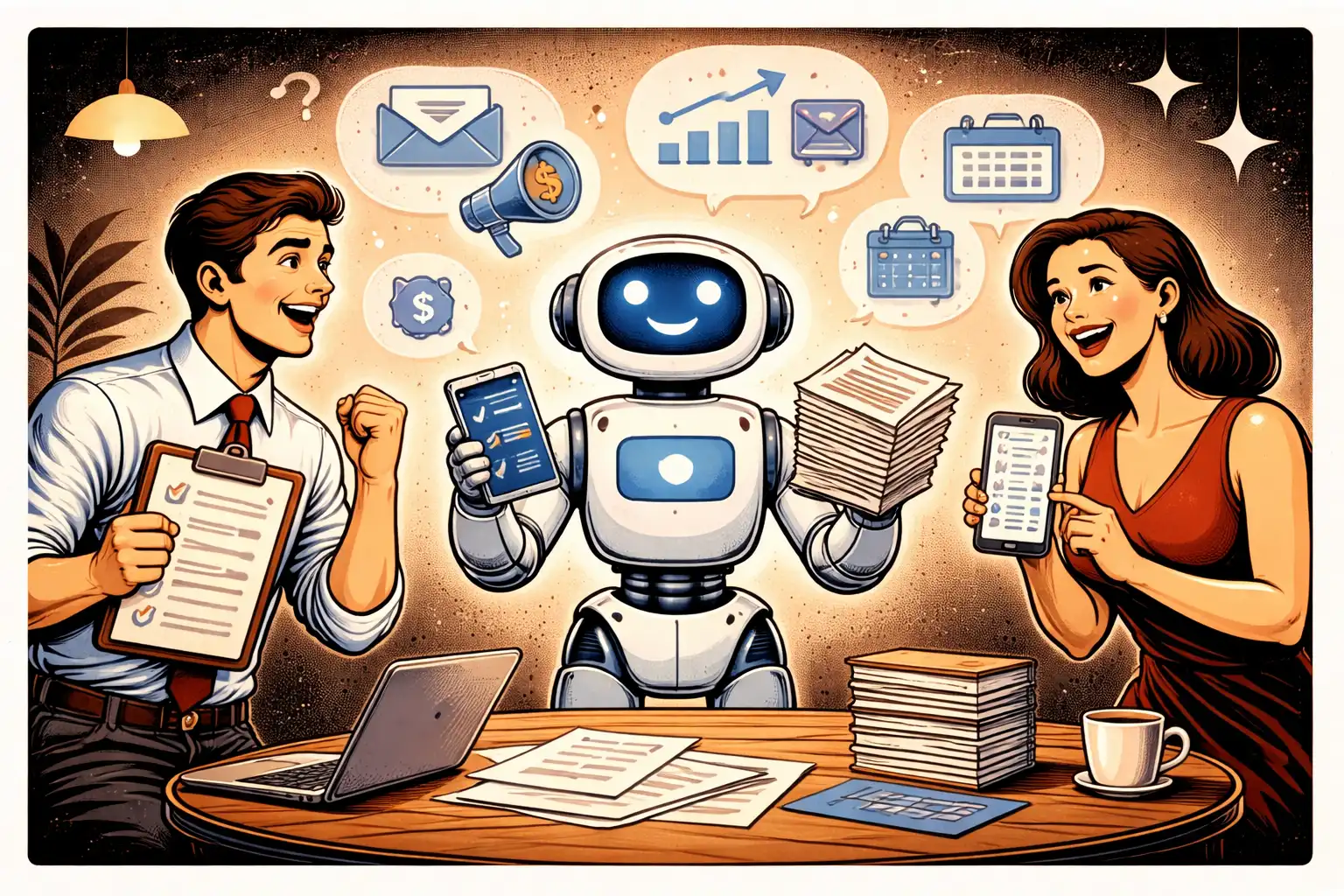

Step 6: Capture feedback that improves the system

Hybrid workflows are only powerful if you learn.

Capture feedback like:

- edits made by humans (before/after)

- reject reasons

- user satisfaction signals

- time spent per review

- error types

This turns human review into training data for process improvement (even without training your own model).
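A sketch of a feedback record that captures the before/after pair, the reject reason, and review time. The field names are hypothetical, and `edit_ratio` uses Python's standard `difflib.SequenceMatcher` as one rough measure of how much a human changed the draft; token-level or field-by-field comparisons would work too.

```python
import difflib
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReviewFeedback:
    item_id: str
    ai_draft: str
    final_text: Optional[str]          # None if the draft was rejected
    reject_reason: Optional[str] = None
    review_seconds: float = 0.0
    error_type: Optional[str] = None   # hypothetical tag set by the reviewer

    @property
    def edit_ratio(self) -> float:
        """0.0 = approved untouched, 1.0 = rejected; values between = edited."""
        if self.final_text is None:
            return 1.0
        similarity = difflib.SequenceMatcher(None, self.ai_draft, self.final_text).ratio()
        return round(1.0 - similarity, 3)


untouched = ReviewFeedback("t1", "Hi, your refund is on the way.",
                           "Hi, your refund is on the way.", review_seconds=8)
edited = ReviewFeedback("t2", "Hi, your refund is on the way.",
                        "Hi Sam, your refund was issued today.", review_seconds=40)
rejected = ReviewFeedback("t3", "Your account is closed.", None,
                          reject_reason="wrong_intent", review_seconds=25)
for fb in (untouched, edited, rejected):
    print(fb.item_id, fb.edit_ratio, fb.review_seconds)
```

Aggregating `edit_ratio` and `review_seconds` by error type shows where reviewers spend effort, which is where process fixes pay off first.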

Step 7: Scale automation gradually (and only where it’s stable)

Automation should expand only when:

- the step repeats consistently

- the input format is predictable

- edge cases are categorized

- your “bad output” rate is low and recoverable
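These triggers can be written down as an explicit checklist, so “should we automate more?” isn't a gut call. A sketch, assuming you already track these stats per workflow step; the `StepStats` fields and every threshold are hypothetical placeholders to set from your own risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class StepStats:
    runs: int                        # times the step ran in the window
    input_valid_rate: float          # share of inputs matching the expected format
    uncategorized_exceptions: int    # exceptions with no reason code
    bad_output_rate: float           # share of outputs rejected or heavily edited
    recoverable_rate: float          # share of bad outputs fixed without user harm


# Hypothetical thresholds.
MIN_RUNS = 200
MIN_INPUT_VALID = 0.95
MAX_BAD_OUTPUT = 0.05
MIN_RECOVERABLE = 0.99


def automation_blockers(s: StepStats) -> list[str]:
    """Return the criteria that still block automation (empty list = ready)."""
    blockers = []
    if s.runs < MIN_RUNS:
        blockers.append("not enough repetitions yet")
    if s.input_valid_rate < MIN_INPUT_VALID:
        blockers.append("input format is not predictable enough")
    if s.uncategorized_exceptions > 0:
        blockers.append("some edge cases have no reason code")
    if s.bad_output_rate > MAX_BAD_OUTPUT:
        blockers.append("bad-output rate is too high")
    if s.recoverable_rate < MIN_RECOVERABLE:
        blockers.append("bad outputs are not reliably recoverable")
    return blockers


print(automation_blockers(StepStats(500, 0.98, 0, 0.02, 1.0)))  # []
print(automation_blockers(StepStats(50, 0.80, 3, 0.10, 0.90)))
```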

A practical sequence:

- automate drafting

- automate formatting + validation

- automate routing (who reviews what)

- automate happy-path execution

- automate more edge cases

Don’t jump straight to the last stage (automating edge cases) before the earlier stages are stable.

The cost angle: hybrid often reduces spend

Counterintuitive truth: hybrid can be cheaper.

Why:

- fewer retries (humans correct once, instead of multiple model calls)

- smaller prompts (structured inputs)

- caching becomes easier

- you avoid automating rare edge cases
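Caching gets easier because structured inputs repeat in a way free-form prompts rarely do. A minimal sketch, assuming JSON-serializable fields and an in-memory dictionary (a production system might use a database table or a shared cache instead); `DraftCache` and the normalization rules are hypothetical.

```python
import hashlib
import json
from typing import Callable


def normalize(fields: dict) -> dict:
    """Trim and lowercase string fields. Only safe where case and spacing carry no meaning."""
    return {k: v.strip().lower() if isinstance(v, str) else v for k, v in fields.items()}


def cache_key(task: str, fields: dict) -> str:
    """Stable key: sorted keys + normalized values, hashed with SHA-256."""
    payload = json.dumps({"task": task, "fields": normalize(fields)}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class DraftCache:
    """In-memory cache in front of an expensive draft-generation call."""

    def __init__(self, generate: Callable[[str, dict], str]):
        self._generate = generate
        self._store: dict[str, str] = {}
        self.model_calls = 0

    def get_draft(self, task: str, fields: dict) -> str:
        key = cache_key(task, fields)
        if key not in self._store:
            self.model_calls += 1
            self._store[key] = self._generate(task, normalize(fields))
        return self._store[key]


def fake_model(task: str, fields: dict) -> str:
    # Stand-in for a real model call (hypothetical).
    return f"Draft for {task}: {fields['category']}"


cache = DraftCache(fake_model)
cache.get_draft("reply", {"category": " Billing "})
cache.get_draft("reply", {"category": "billing"})  # same normalized input, served from cache
print(cache.model_calls)  # 1
```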

If you want the founder view of what drives spend, read AI Costs for Startups in 2026: What Drives Spend.

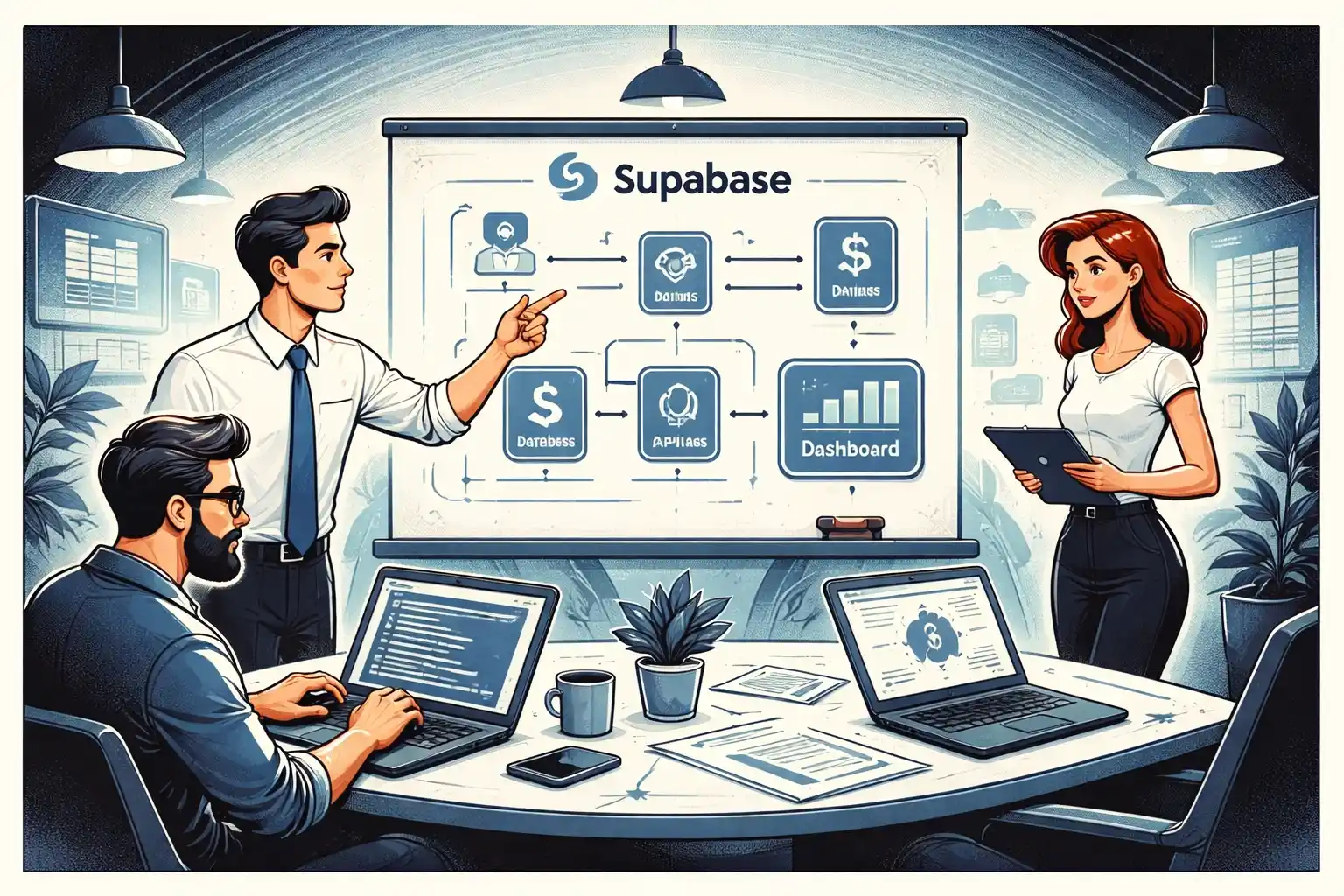

The MVP implementation: what you actually need

For most startups, the MVP hybrid system needs:

- a user intake flow

- a review queue for humans

- structured AI output

- simple validation rules

- an admin view

- basic analytics events

If you’re building on Supabase or similar, clean permissions and server-side actions are essential because review tools often expose sensitive data. See Supabase MVP Architecture in 2026: Practical Patterns.

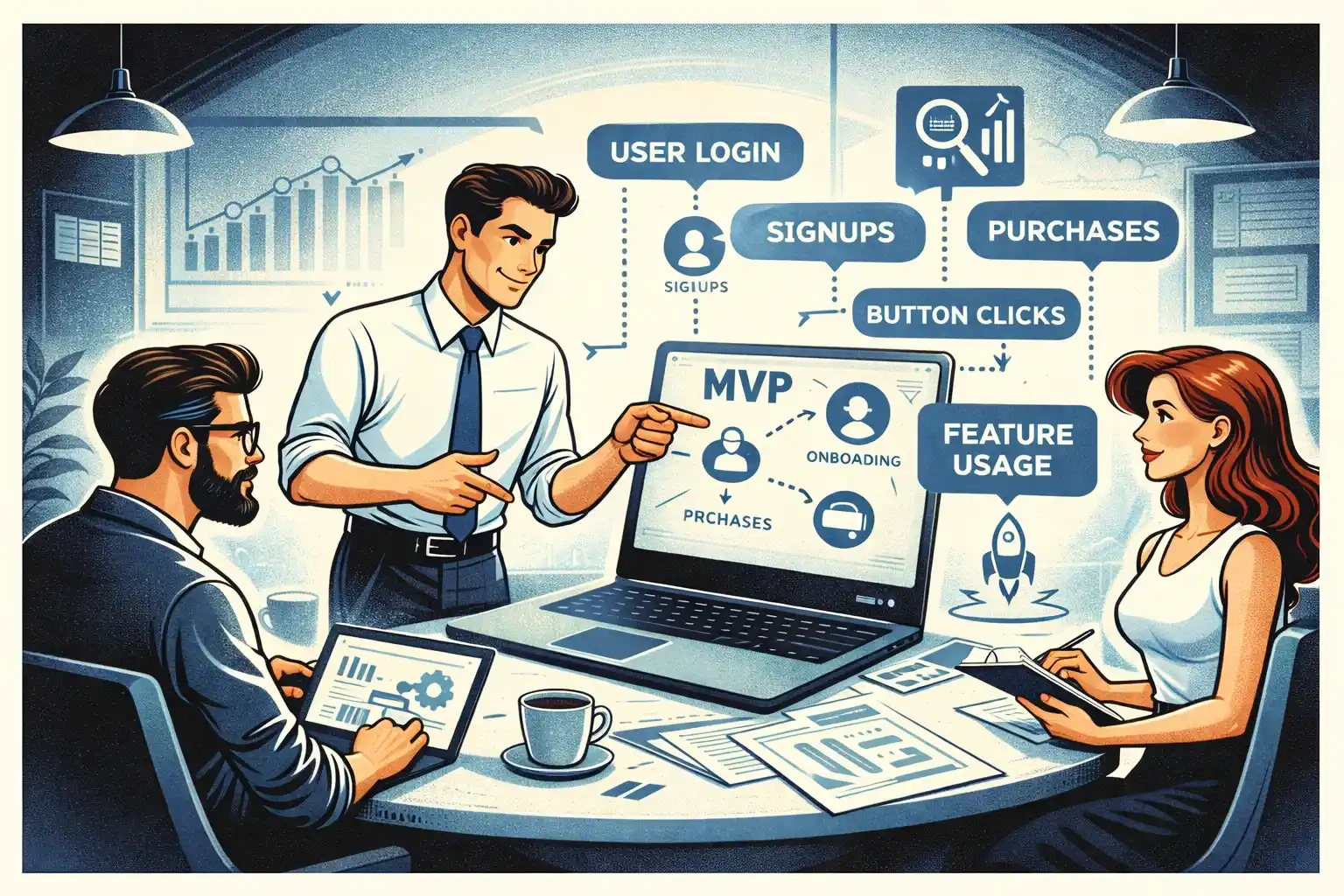

Measure what matters in hybrid workflows

You’re optimizing for outcomes, not “AI usage.”

Track:

- time-to-value

- approval rate

- correction rate (how often humans edit)

- exception rate

- cost per successful outcome

- user satisfaction signals
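A sketch of computing these metrics from per-item outcomes. The `Outcome` fields are hypothetical and assume you can attribute model and review cost to each item; correction rate is measured over approved items only here, which is one reasonable definition among several.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Outcome:
    approved: bool
    edited: bool             # a human changed the AI draft before approving
    exception: bool          # item hit the exception path
    cost_usd: float          # model + review cost attributed to this item
    seconds_to_value: float  # intake to delivered result (approved items)


def hybrid_metrics(outcomes: list[Outcome]) -> dict:
    n = len(outcomes)
    if n == 0:
        return {}
    approved = [o for o in outcomes if o.approved]
    total_cost = sum(o.cost_usd for o in outcomes)
    return {
        "approval_rate": len(approved) / n,
        "correction_rate": sum(o.edited for o in approved) / len(approved) if approved else 0.0,
        "exception_rate": sum(o.exception for o in outcomes) / n,
        # All spend, including failed attempts, divided by successes only.
        "cost_per_success": total_cost / len(approved) if approved else float("inf"),
        "median_time_to_value": (
            statistics.median(o.seconds_to_value for o in approved) if approved else None
        ),
    }


outcomes = [
    Outcome(True, False, False, 0.02, 30),
    Outcome(True, True, False, 0.05, 120),
    Outcome(False, False, True, 0.04, 0),
    Outcome(True, False, False, 0.01, 45),
]
print(hybrid_metrics(outcomes))
```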

If you want a lean event plan, use MVP Analytics in 2026: Events to Track Early.

How to test hybrid workflows (founder-led)

Hybrid systems are UX systems.

You need to watch:

- how users interpret AI drafts

- whether review feels fast or annoying

- where humans spend time

- what breaks trust

Run founder-led sessions weekly. This setup helps: Founder-Led MVP Testing in 2026: A Practical Setup.

Thinking about building an AI-powered workflow in 2026?

At Valtorian, we help founders design and launch modern web and mobile apps, including AI-powered workflows, with a focus on real user behavior, not demo-only prototypes.

Book a call with Diana

Let’s talk about your idea, scope, and fastest path to a usable MVP.

FAQ

What’s the best hybrid pattern for an AI MVP?

AI drafts the output, a human approves or edits it, and the product captures feedback to improve the process. Automate only the steps that are stable and repeatable.

When should humans stay in the loop?

When decisions are high-stakes, inputs are ambiguous, or wrong outputs would break trust. Keep review for edge cases and policy-sensitive actions.

How do I prevent hybrid workflows from becoming “manual forever”?

Define automation triggers: repeatability, low correction rate, categorized edge cases, and clear success metrics.

How do I design a good handoff?

Use explicit states, a review queue, one-click actions, and an audit trail. Treat the handoff like a core product feature.

Does hybrid increase costs?

Not always. Hybrid can reduce retries, reduce token bloat, and avoid automating rare edge cases. Measure cost per successful outcome.

What should I measure to improve the hybrid system?

Correction rate, approval rate, exception rate, time-to-value, and user satisfaction. These metrics tell you where to automate next.

How do I test hybrid workflows quickly?

Run weekly founder-led sessions with real users and reviewers. Watch where trust breaks and where humans spend time, then ship 1–3 fixes per cycle.
